The Volatility Cone: A Quant's Tool for Mapping Price Uncertainty

How Jennifer Aniston’s LolaVie brand grew sales 40% with CTV ads

The DTC beauty category is crowded. To break through, Jennifer Aniston’s brand LolaVie, worked with Roku Ads Manager to easily set up, test, and optimize CTV ad creatives. The campaign helped drive a big lift in sales and customer growth, helping LolaVie break through in the crowded beauty category.

② One strategy in this book returned 2.3× the S&P 500 on a risk-adjusted basis over 5 years.

Fully coded in Python. Yours to run today.

The 2026 Playbook — 30+ backtested strategies,

full code included, ready to deploy.

20% off until Tuesday. Use APRIL2026 at checkout.

$79 → $63.20 · Expires April 7.

→ Grab it before Tuesday

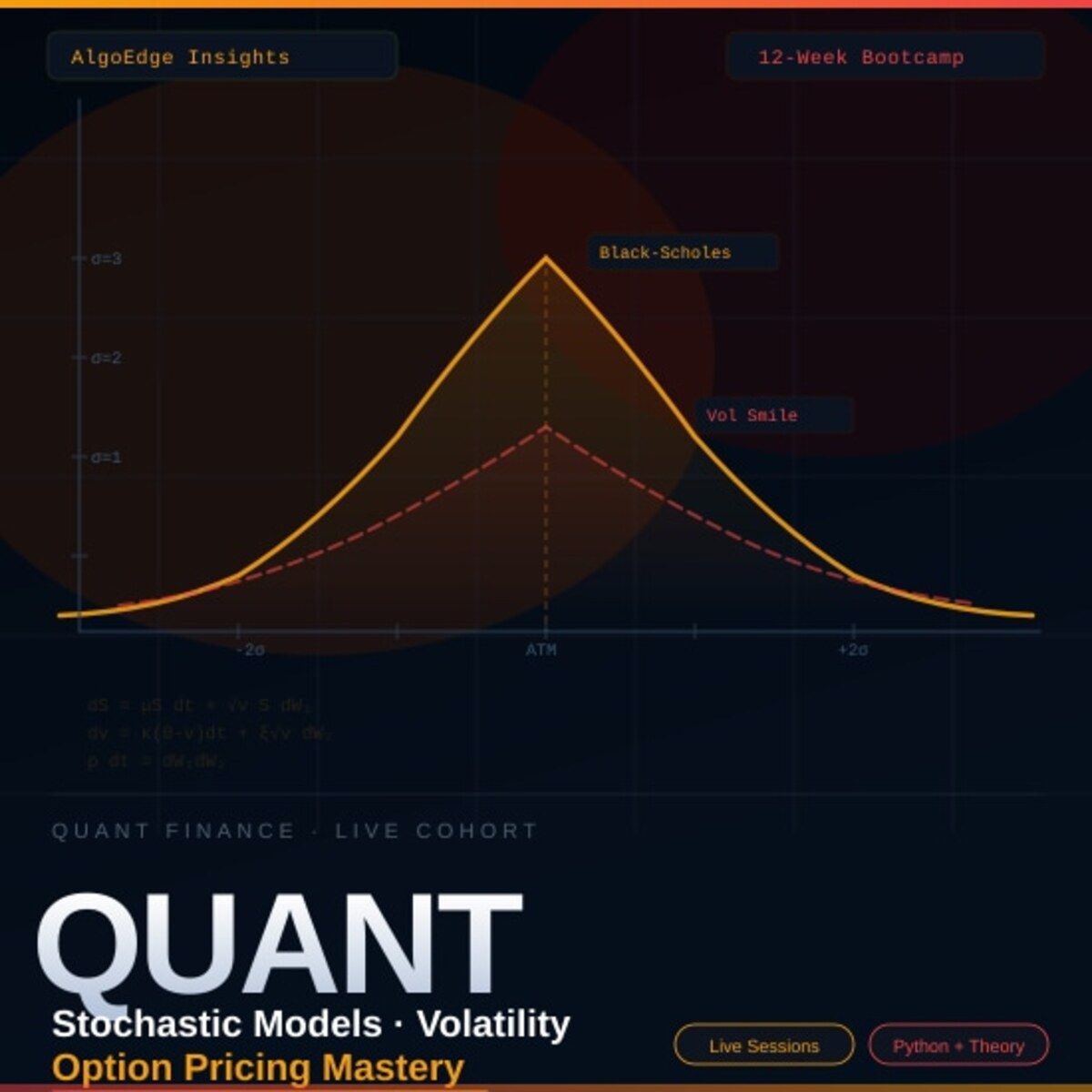

⑤ Most quant courses teach you to watch. This one makes you build.

Live. Weekly. With feedback on your actual code.

The AlgoEdge Quant Finance Bootcamp — 12 weeks of stochastic models, Black-Scholes, Heston, volatility surfaces, and exotic options. Built from scratch in Python.

Not pre-recorded. Not self-paced. Live sessions, weekly homework, direct feedback, and a full code library that's yours to keep.

Cohort size is limited intentionally — so every question gets answered.

→ Before you enroll, reach out for a 15-minute fit check. No pitch, no pressure.

📩 Email first: [email protected]

“Outperforming the market with low volatility on a consistent basis is an impossibility. I outperformed the market for 30-odd years, but not with low volatility.”- George Soros 💯 👏

Synopsis: This paper is about advanced volatility modeling by combining return bootstrapping with self-exciting jump dynamics across stocks, ETFs, indices, and BTC for market microstructure analysis and Monte Carlo scenario testing.

cf. TOC 📝

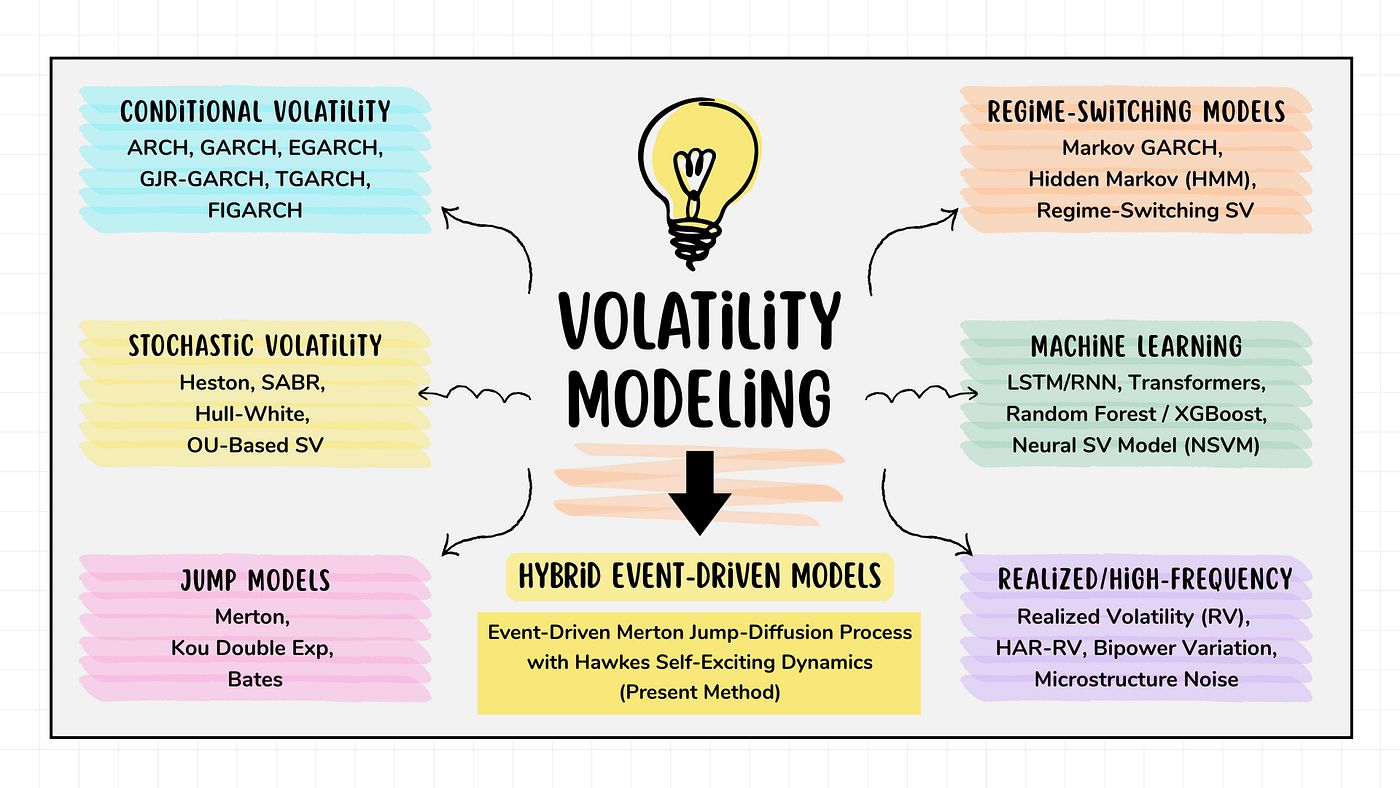

Volatility Modeling Flow Chart (Author’s Illustration, Created with Canva)

👋 😃 Hello everyone! In this post we explore how an event-driven Merton jump-diffusion model [4] enhanced with Hawkes process [5] can capture volatility clustering [3] in intraday (1-minute) multi-asset markets represented by Tech Stocks, ETFs, Indices [1], and BTC-USD [2].

Geometric Brownian Motion (GBM) is a widely used baseline model for asset prices with the assumption of their constant volatilities. It assumes log returns are normally distributed. This model simulates only smooth diffusive movements and ignores sudden large shocks. GBM treats each price step as dependent solely on the current price, with no memory of past jumps (Markov property). For example, the famous Black-Scholes model for option pricing is based on GBM. It fails to capture fat tails and volatility clustering observed in real markets.

GARCH (Generalized Autoregressive Conditional Heteroskedasticity) family represents volatility as stochastic and time-varying, capturing conditional heteroskedasticity in financial returns. While it does not explicitly incorporate jumps, extreme returns influence future volatility, allowing the framework to capture volatility clustering through the ARCH effect. Returns are assumed conditionally normal, though fat tails are often observed in practice. Its strengths include accurately representing time-varying volatility and empirical return behavior, making it useful for risk management, Value at Risk (VaR), and volatility forecasting. Its limitations are the absence of explicit price diffusion and jumps, relying solely on conditional variance.

The Heston model describes volatility as stochastic and mean-reverting, with a volatility process tending toward a long-term level. It does not include jumps, so price paths are continuous, but volatility exhibits autocorrelation through mean reversion. As a result, the distribution of prices is non-lognormal due to stochastic variance. Its strengths include capturing the volatility smile, stochastic volatility, and mean-reverting behavior, making it ideal for option pricing and realistic derivative modeling. However, it is more complex, and closed-form option pricing typically requires characteristic functions.

The Merton Jump-Diffusion model [4] combines constant diffusive volatility with Poisson-driven jumps, where jump sizes follow a lognormal distribution. It assumes no memory or autocorrelation, treating jumps as independent and the process as Markovian. Price distributions are primarily lognormal but include occasional large jumps, producing fat tails. This approach is valuable for modeling sudden shocks and extreme events, offering a more realistic alternative to GBM, and is commonly used in risk management and option pricing, though it does not capture volatility clustering and requires careful calibration of jump parameters.

The Hawkes-driven Merton Jump-Diffusion model [3], which forms the basis of the present approach, combines empirical diffusion [4] through bootstrapped log returns with jump-driven volatility [5]. Jumps are self-exciting, meaning that the occurrence of one jump increases the likelihood of subsequent jumps. Memory and autocorrelation are incorporated via the Hawkes intensity [5], capturing both volatility clustering and jump clustering. Price distributions exhibit fat tails, clustered jumps, and stochastic paths generated through Monte Carlo simulation. This framework effectively captures both jumps and clustering, producing probabilistic multi-path outputs, though it requires historical jump data and is more computationally intensive. It is particularly suited for intraday multi-asset modeling, stress testing, market microstructure analysis, and Monte Carlo scenario generation.

Let’s get to the bottom of this!🚀🔍📊✨

Your ads ran overnight. Nobody was watching. Except Viktor.

One brand built 30+ landing pages through Viktor without a single developer.

Each page mapped to a specific ad group. All deployed within hours. Viktor wrote the code and shipped every one from a Slack message.

That same team has Viktor monitoring ad accounts across the portfolio and posting performance briefs before the day starts. One colleague. Always on. Across every account.

Contents 📝

· Intraday Multi-Asset Data: Load, Visualize, Analyze

· Merton–Hawkes Monte Carlo Simulations

· Conclusions 🎯

· References 📚

· Explore More 🌟

· Contacts 📬

· Disclaimer 📜

Intraday Multi-Asset Data: Load, Visualize, Analyze

In this section, we develop a structured workflow for loading, preprocessing, analyzing, and visualizing intraday data across a multi-asset universe, enabling consistent exploration of market dynamics and volatility patterns.

This constitutes a key component of the ETL (Extract–Transform–Load) pipeline.

We begin by loading sixteen 1-minute multi-asset datasets [1, 2]

import pandas as pd

import numpy as np

def load_intraday_data(file_path):

"""

Load intraday OHLC CSV and return DataFrame with datetime index.

"""

df = pd.read_csv(file_path)

# Convert timestamp

df["timestamp"] = pd.to_datetime(df["timestamp"])

# Sort and set index

df = df.sort_values("timestamp")

df = df.set_index("timestamp")

return df.copy()

def load_txt_ohlc(

file_path,

add_volume=True

):

"""

Load OHLC data from a .txt file (no header) and return cleaned DataFrame.

Parameters:

----------

file_path : str

Path to the .txt file

add_volume : bool

If True, adds a NaN volume column

Returns:

-------

df : pd.DataFrame

DataFrame with datetime index and OHLC (+ volume)

"""

# Read file

df = pd.read_csv(

file_path,

header=None,

names=['timestamp', 'open', 'high', 'low', 'close', 'volume'],

parse_dates=['timestamp']

)

# Add volume if needed

if add_volume:

df['volume'] = np.nan

# Reorder columns

df = df[['timestamp', 'open', 'high', 'low', 'close', 'volume']]

# Sort and set index

df = df.sort_values("timestamp")

df = df.set_index("timestamp")

return df.copy()

def load_crypto_gzip(

file_path,

timestamp_unit="s"

):

"""

Load compressed OHLC crypto data and return cleaned DataFrame.

Parameters:

----------

file_path : str

Path to .csv.gz file

timestamp_unit : str

Unit of timestamp ('s' for seconds, 'ms' for milliseconds)

Returns:

-------

df : pd.DataFrame

DataFrame with datetime index

"""

# Load compressed CSV

df = pd.read_csv(

file_path,

compression="gzip"

)

# Convert timestamp

if "timestamp" not in df.columns:

raise ValueError("CSV must contain 'timestamp' column")

df["timestamp"] = pd.to_datetime(df["timestamp"], unit=timestamp_unit)

# Sort and set index

df = df.sort_values("timestamp")

df = df.set_index("timestamp")

return df.copy()

#Stocks

df_msft = load_intraday_data("MSFT_1min_firstratedata.csv")

df_aapl = load_intraday_data("AAPL_1min_firstratedata.csv")

df_meta = load_intraday_data("META_1min_firstratedata.csv")

df_amzn = load_intraday_data("AMZN_1min_firstratedata.csv")

df_tsla = load_intraday_data("TSLA_1min_firstratedata.csv")

#ETFs

df_spy = load_intraday_data("SPY_1min_firstratedata.csv")

df_qqq = load_intraday_data("QQQ_1min_firstratedata.csv")

df_vxx = load_intraday_data("VXX_1min_firstratedata.csv")

df_dia = load_intraday_data("DIA_1min_firstratedata.csv")

df_eem = load_intraday_data("EEM_1min_firstratedata.csv")

#Indices

df_spx = load_txt_ohlc("SPX_full_1min.txt")

df_dji = load_txt_ohlc("DJI_full_1min.txt")

df_vix = load_txt_ohlc("VIX_full_1min.txt")

df_ndx = load_txt_ohlc("NDX_full_1min.txt")

df_rut = load_txt_ohlc("RUT_full_1min.txt")

#BTC-USD

df_btc = load_crypto_gzip("btcusd_bitstamp_1min_2012-2025.csv.gz")Next, we take a closer look at the general structure of the datasets

#Stocks

df_msft.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 158999 entries, 2022-09-30 04:00:00 to 2023-09-29 19:55:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 158999 non-null float64

1 high 158999 non-null float64

2 low 158999 non-null float64

3 close 158999 non-null float64

4 volume 158999 non-null int64

dtypes: float64(4), int64(1)

memory usage: 7.3 MB

df_meta.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 162279 entries, 2022-09-30 04:00:00 to 2023-09-29 19:55:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 162279 non-null float64

1 high 162279 non-null float64

2 low 162279 non-null float64

3 close 162279 non-null float64

4 volume 162279 non-null int64

dtypes: float64(4), int64(1)

memory usage: 7.4 MB

df_amzn.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 193619 entries, 2022-09-30 04:00:00 to 2023-09-29 19:50:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 193619 non-null float64

1 high 193619 non-null float64

2 low 193619 non-null float64

3 close 193619 non-null float64

4 volume 193619 non-null int64

dtypes: float64(4), int64(1)

memory usage: 8.9 MB

df_tsla.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 230816 entries, 2022-09-30 04:00:00 to 2023-09-29 19:58:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 230816 non-null float64

1 high 230816 non-null float64

2 low 230816 non-null float64

3 close 230816 non-null float64

4 volume 230816 non-null int64

dtypes: float64(4), int64(1)

memory usage: 10.6 MB

df_aapl.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 193829 entries, 2022-09-30 04:00:00 to 2023-09-29 19:49:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 193829 non-null float64

1 high 193829 non-null float64

2 low 193829 non-null float64

3 close 193829 non-null float64

4 volume 193829 non-null int64

dtypes: float64(4), int64(1)

memory usage: 8.9 MB

#ETFs

df_spy.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 207824 entries, 2022-09-30 04:00:00 to 2023-09-29 19:48:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 207824 non-null float64

1 high 207824 non-null float64

2 low 207824 non-null float64

3 close 207824 non-null float64

4 volume 207824 non-null int64

dtypes: float64(4), int64(1)

memory usage: 9.5 MB

df_qqq.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 210482 entries, 2022-09-30 04:00:00 to 2023-09-29 19:47:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 210482 non-null float64

1 high 210482 non-null float64

2 low 210482 non-null float64

3 close 210482 non-null float64

4 volume 210482 non-null int64

dtypes: float64(4), int64(1)

memory usage: 9.6 MB

df_vxx.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 121604 entries, 2022-09-30 04:13:00 to 2023-09-29 19:59:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 121604 non-null float64

1 high 121604 non-null float64

2 low 121604 non-null float64

3 close 121604 non-null float64

4 volume 121604 non-null float64

dtypes: float64(5)

memory usage: 5.6 MB

df_dia.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 119715 entries, 2022-09-30 04:00:00 to 2023-09-29 17:22:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 119715 non-null float64

1 high 119715 non-null float64

2 low 119715 non-null float64

3 close 119715 non-null float64

4 volume 119715 non-null int64

dtypes: float64(4), int64(1)

memory usage: 5.5 MB

df_eem.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 113256 entries, 2022-09-30 04:21:00 to 2023-09-29 16:36:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 113256 non-null float64

1 high 113256 non-null float64

2 low 113256 non-null float64

3 close 113256 non-null float64

4 volume 113256 non-null int64

dtypes: float64(4), int64(1)

memory usage: 5.2 MB

#Indices

df_spx.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 99074 entries, 2022-09-30 09:30:00 to 2023-09-29 16:05:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 99074 non-null float64

1 high 99074 non-null float64

2 low 99074 non-null float64

3 close 99074 non-null float64

4 volume 0 non-null float64

dtypes: float64(5)

memory usage: 4.5 MB

df_dji.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 99069 entries, 2022-09-30 09:30:00 to 2023-09-29 16:05:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 99069 non-null float64

1 high 99069 non-null float64

2 low 99069 non-null float64

3 close 99069 non-null float64

4 volume 0 non-null float64

dtypes: float64(5)

memory usage: 4.5 MB

df_vix.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 191737 entries, 2022-09-30 03:15:00 to 2023-09-29 16:15:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 191737 non-null float64

1 high 191737 non-null float64

2 low 191737 non-null float64

3 close 191737 non-null float64

4 volume 0 non-null float64

dtypes: float64(5)

memory usage: 8.8 MB

df_ndx.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 97933 entries, 2022-09-30 09:30:00 to 2023-09-29 16:00:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 97933 non-null float64

1 high 97933 non-null float64

2 low 97933 non-null float64

3 close 97933 non-null float64

4 volume 0 non-null float64

dtypes: float64(5)

memory usage: 4.5 MB

df_rut.info()

DatetimeIndex: 100058 entries, 2022-09-30 09:30:00 to 2023-09-29 16:09:00

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 open 100058 non-null float64

1 high 100058 non-null float64

2 low 100058 non-null float64

3 close 100058 non-null float64

4 volume 0 non-null float64

dtypes: float64(5)

memory usage: 4.6 MB

#BTC-USD

df_btc.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 6846600 entries, 2012-01-01 10:01:00 to 2025-01-07 00:00:00

Data columns (total 5 columns):

# Column Dtype

--- ------ -----

0 open float64

1 high float64

2 low float64

3 close float64

4 volume float64

dtypes: float64(5)

memory usage: 313.4 MBFinally, we visualize OHLC candlesticks and volume for each dataset using mplfinance:

Helper plotting functions plot_candlestick, plot_index_candles, and plot_crypto_candles

!pip install mplfinance

import mplfinance as mpf

def plot_candlestick(

df,

title="OHLC Candlestick with Volume",

resample_rule="60min",

style="yahoo",

figsize=(12, 6)

):

"""

Plot OHLC candlestick with volume.

Parameters:

- df : DataFrame with columns [open, high, low, close, volume]

- title : Plot title

- resample_rule : e.g. '60min', '5min', '1D' (None to skip resampling)

- style : mplfinance style

- figsize : figure size

"""

# --- Copy and rename columns ---

df_plot = df.copy()

df_plot.columns = ['Open', 'High', 'Low', 'Close', 'Volume']

# --- Ensure datetime index ---

df_plot.index = pd.to_datetime(df_plot.index)

# --- Optional resampling ---

if resample_rule is not None:

df_plot = df_plot.resample(resample_rule).agg({

'Open': 'first',

'High': 'max',

'Low': 'min',

'Close': 'last',

'Volume': 'sum'

})

# Drop NaNs after resampling

df_plot = df_plot.dropna()

# --- Plot ---

mpf.plot(

df_plot,

type='candle',

volume=True,

style=style,

figsize=figsize,

title=title,

ylabel='Price',

ylabel_lower='Volume'

)

def plot_index_candles(

df,

title="Index OHLC Candlestick",

resample_rule="60min",

style="yahoo",

figsize=(12, 6)

):

"""

Plot OHLC candlestick for indices (no volume).

Parameters:

----------

df : DataFrame

Columns: open, high, low, close

title : str

Plot title

resample_rule : str or None

Resampling frequency (e.g. '60min')

style : str

mplfinance style

figsize : tuple

Figure size

"""

# --- Copy & rename ---

df_plot = df.copy()

df_plot = df_plot.rename(columns={

'open': 'Open',

'high': 'High',

'low': 'Low',

'close': 'Close'

})

# --- Ensure datetime index ---

df_plot.index = pd.to_datetime(df_plot.index)

# --- Resample ---

if resample_rule is not None:

df_plot = df_plot.resample(resample_rule).agg({

'Open': 'first',

'High': 'max',

'Low': 'min',

'Close': 'last'

})

# --- Clean ---

df_plot = df_plot.dropna()

# --- Plot (NO volume) ---

mpf.plot(

df_plot,

type='candle',

volume=False, # explicitly disabled

style=style,

figsize=figsize,

title=title,

ylabel='Price'

)

def plot_crypto_candles(

df,

sample_size=50_000,

resample_rule="60min",

style="yahoo",

title="Crypto OHLC Candlestick",

figsize=(12, 6)

):

# --- Copy & standardize ---

df_plot = df.copy()

df_plot = df_plot.rename(columns={

'open': 'Open',

'high': 'High',

'low': 'Low',

'close': 'Close',

'volume': 'Volume'

})

# --- Ensure datetime index ---

df_plot.index = pd.to_datetime(df_plot.index)

# --- Resample ---

df_plot = df_plot.resample(resample_rule).agg({

'Open': 'first',

'High': 'max',

'Low': 'min',

'Close': 'last',

'Volume': 'sum'

})

# --- Clean ---

df_plot = df_plot.dropna()

# --- Sample safely ---

if sample_size is not None:

sample_size = min(sample_size, len(df_plot))

df_plot = df_plot.tail(sample_size)

# --- Plot (volume panel below) ---

mpf.plot(

df_plot,

type='candle',

volume=True, # ensures volume panel below

style=style,

figsize=figsize,

title=title,

ylabel='Price',

ylabel_lower='Volume',

panel_ratios=(3, 1), # OHLC bigger, volume smaller

tight_layout=True

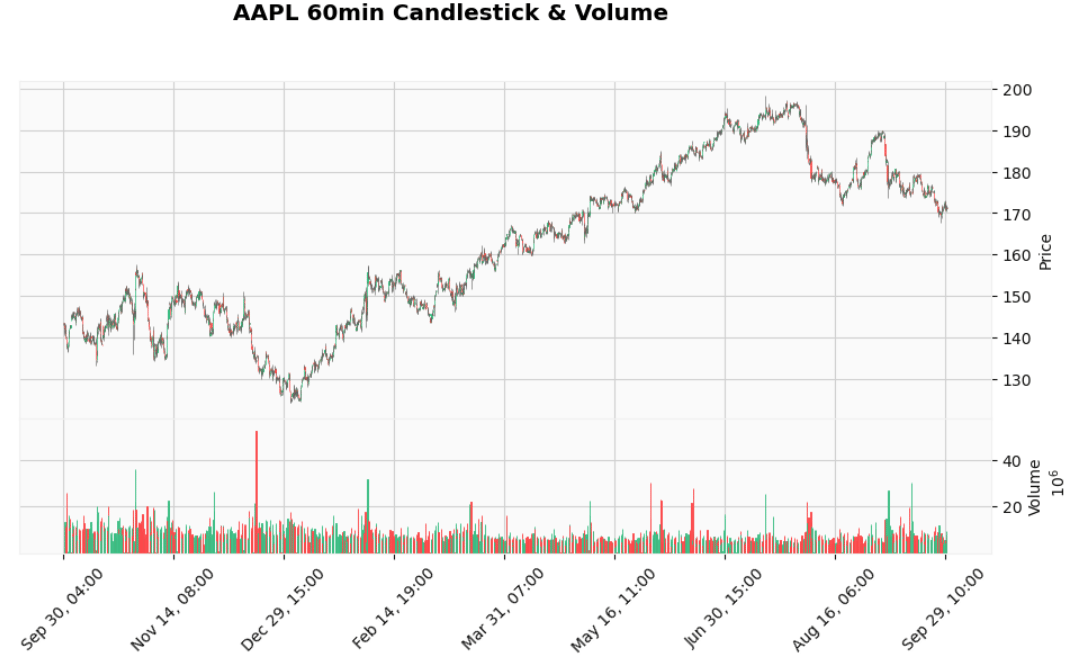

)plot_candlestick(df_aapl, title="AAPL 60min Candlestick & Volume")

AAPL 60min Candlestick & Volume

Turn AI into Your Income Engine

Ready to transform artificial intelligence from a buzzword into your personal revenue generator

HubSpot’s groundbreaking guide "200+ AI-Powered Income Ideas" is your gateway to financial innovation in the digital age.

Inside you'll discover:

A curated collection of 200+ profitable opportunities spanning content creation, e-commerce, gaming, and emerging digital markets—each vetted for real-world potential

Step-by-step implementation guides designed for beginners, making AI accessible regardless of your technical background

Cutting-edge strategies aligned with current market trends, ensuring your ventures stay ahead of the curve

Download your guide today and unlock a future where artificial intelligence powers your success. Your next income stream is waiting.

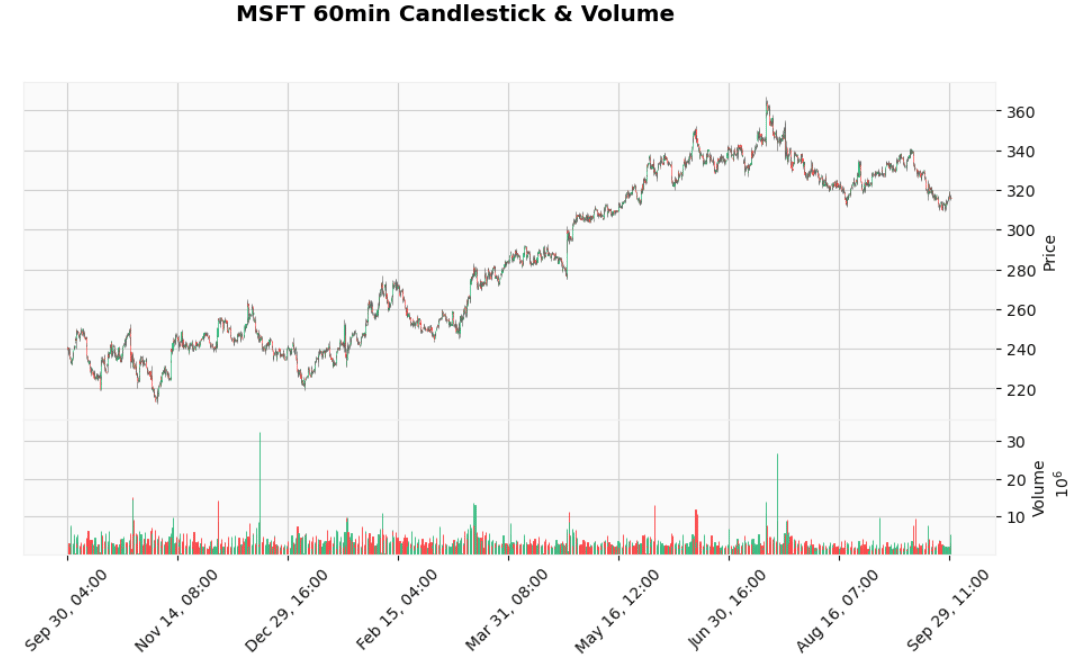

plot_candlestick(df_msft, title="MSFT 60min Candlestick & Volume")

MSFT 60min Candlestick & Volume

plot_candlestick(df_meta, title="META 60min Candlestick & Volume")

META 60min Candlestick & Volume

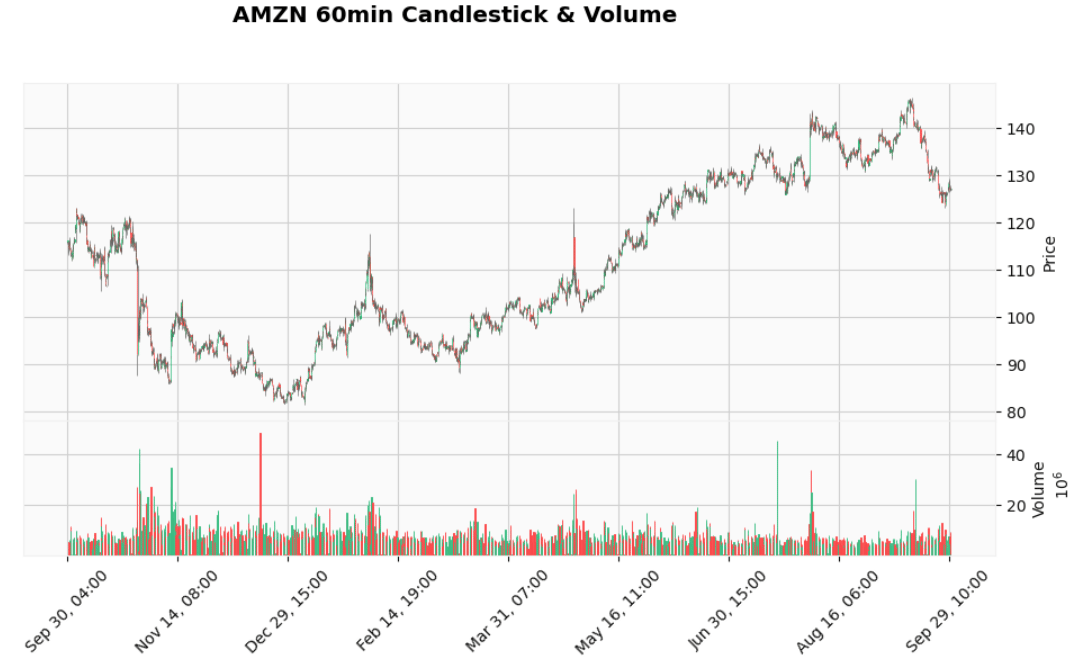

plot_candlestick(df_amzn, title="AMZN 60min Candlestick & Volume")

AMZN 60min Candlestick & Volume

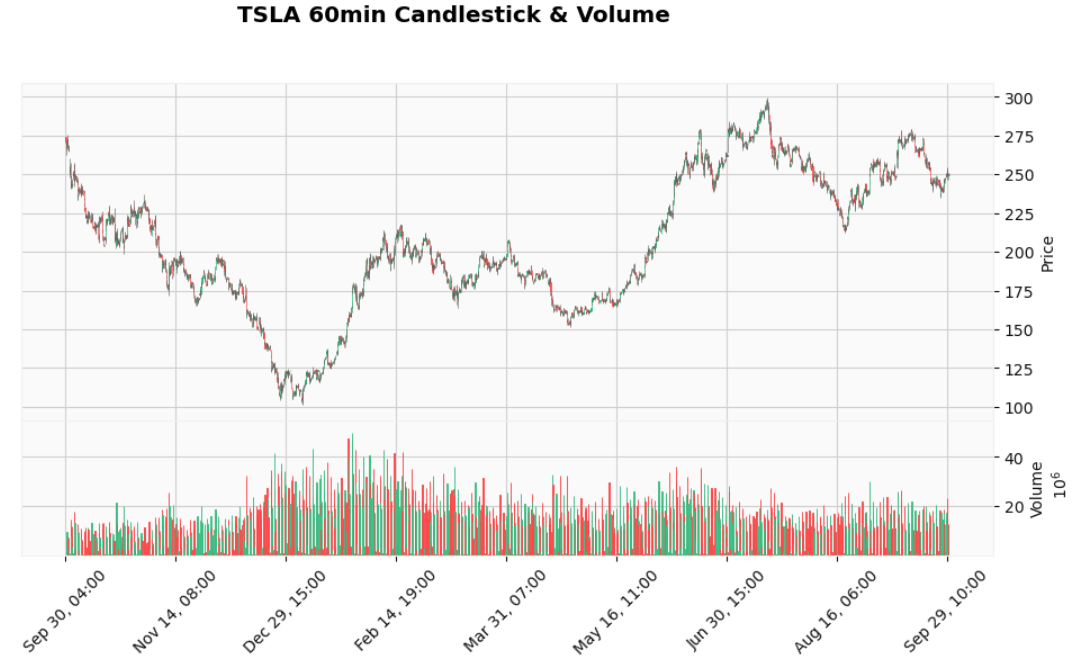

plot_candlestick(df_tsla, title="TSLA 60min Candlestick & Volume")

TSLA 60min Candlestick & Volume

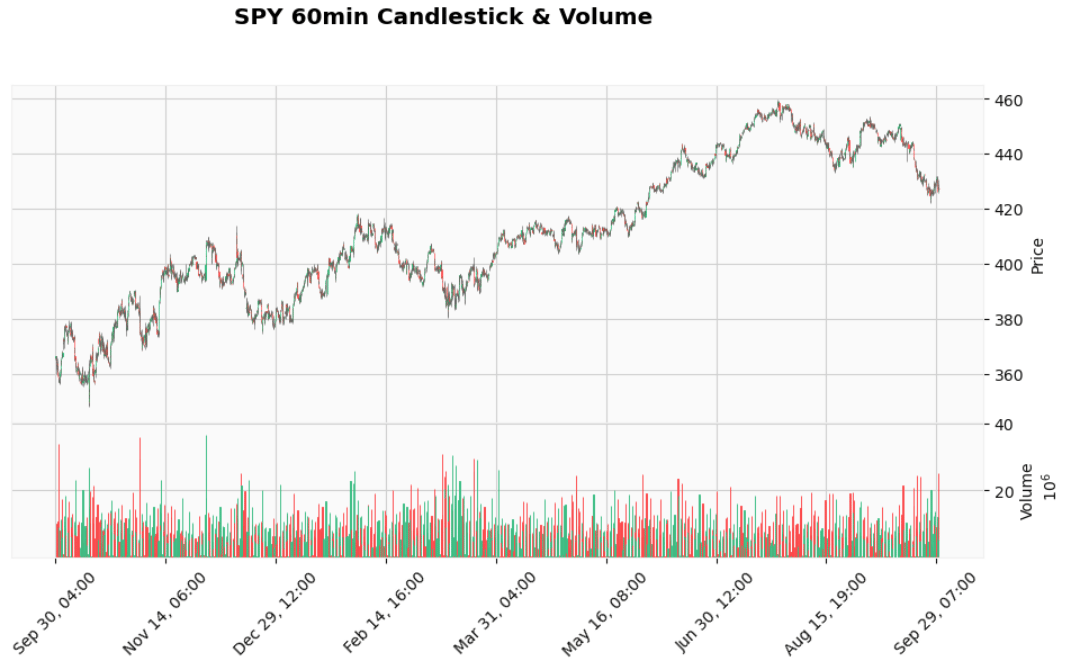

SPY (SPDR S&P 500)

plot_candlestick(df_spy, title="SPY 60min Candlestick & Volume")

SPY 60min Candlestick & Volume

QQQ (Invesco QQQ Trust)

plot_candlestick(df_qqq, title="QQQ 60min Candlestick & Volume")

QQQ 60min Candlestick & Volume

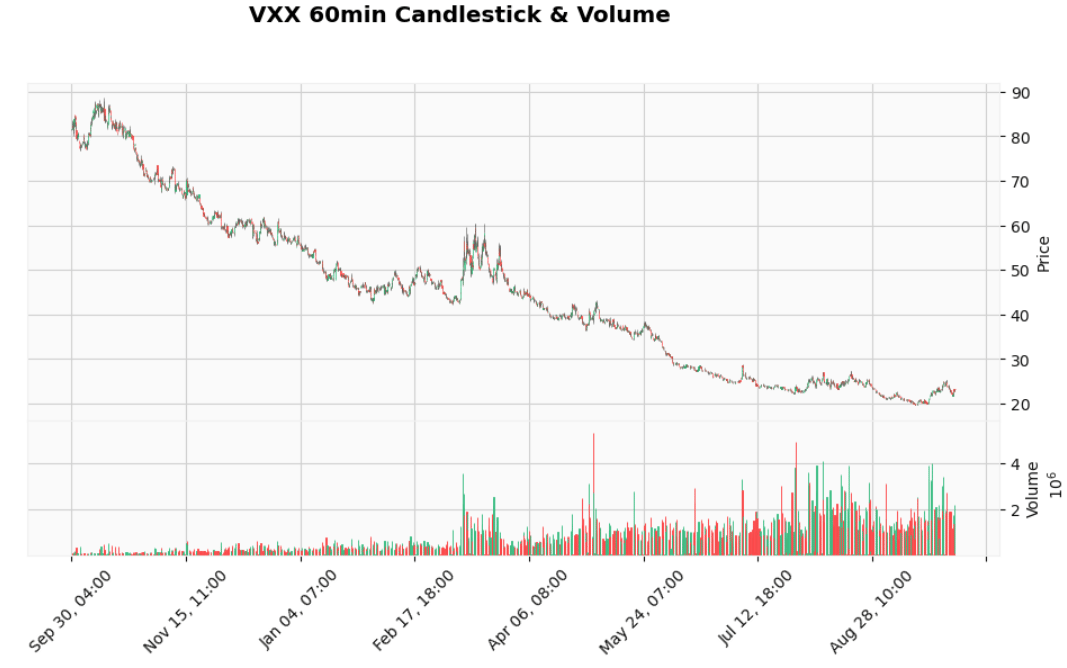

plot_candlestick(df_vxx, title="VXX 60min Candlestick & Volume")

VXX 60min Candlestick & Volume

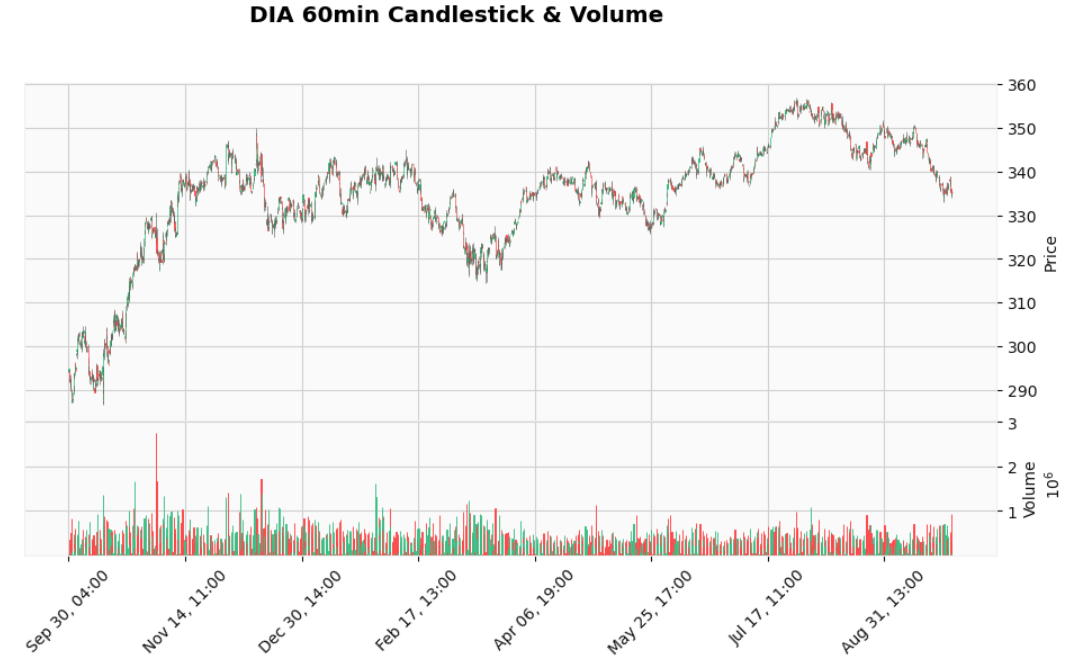

plot_candlestick(df_dia, title="DIA 60min Candlestick & Volume")

DIA 60min Candlestick & Volume

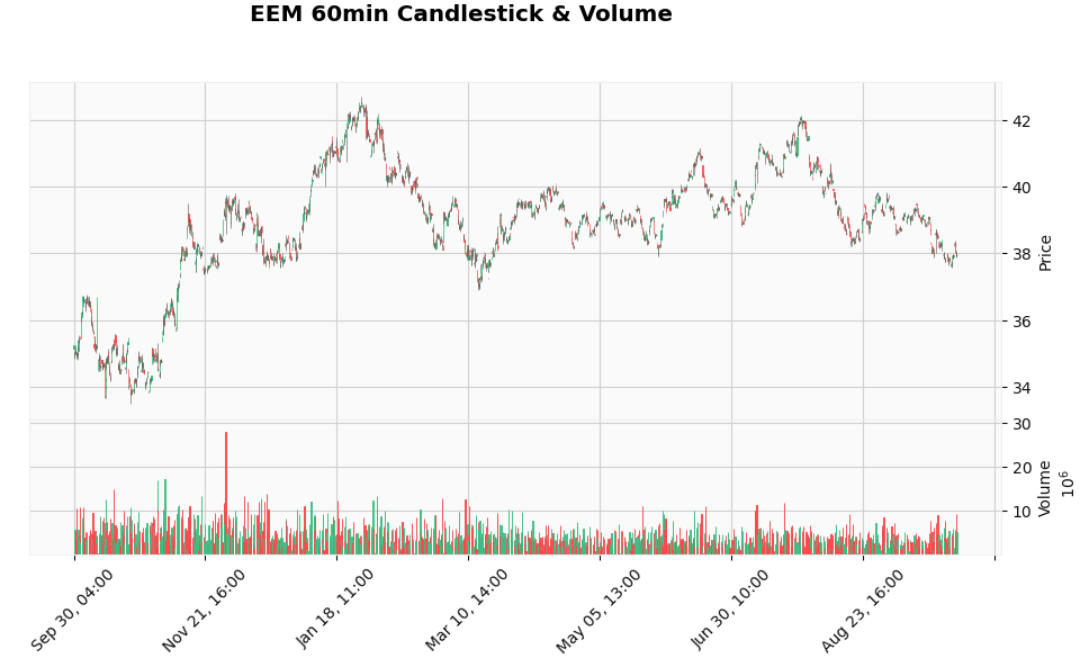

plot_candlestick(df_eem, title="EEM 60min Candlestick & Volume")

EEM 60min Candlestick & Volume

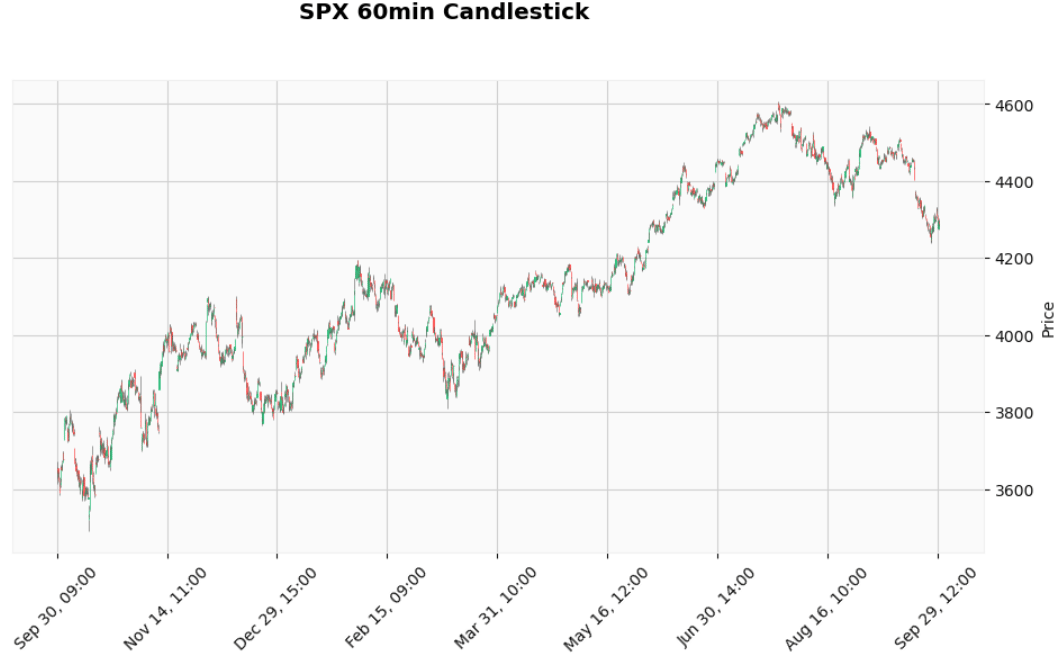

SPX (S&P 500)

plot_index_candles(df_spx, title="SPX 60min Candlestick")

SPX 60min Candlestick

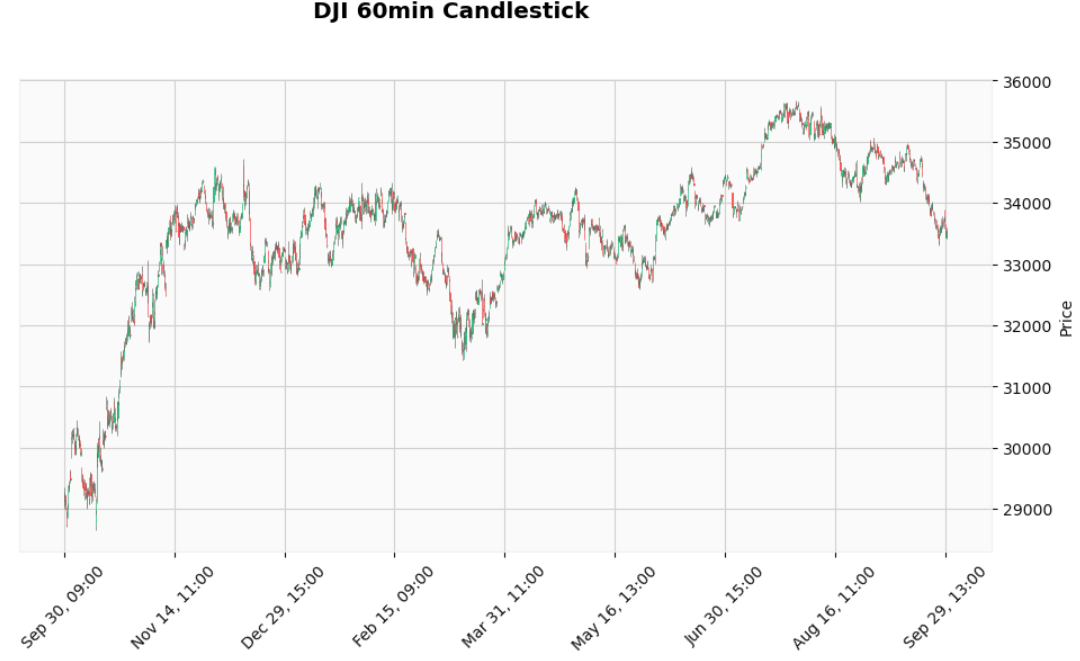

plot_index_candles(df_dji, title="DJI 60min Candlestick")

DJI 60min Candlestick

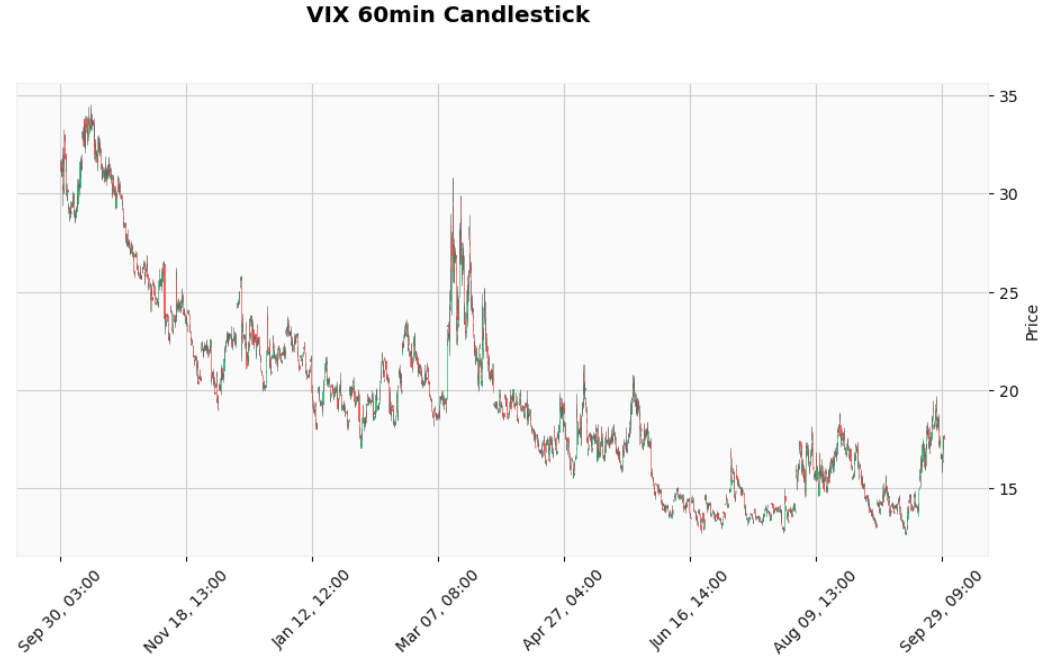

VIX (CBOE Volatility Index)

plot_index_candles(df_vix, title="VIX 60min Candlestick")

VIX 60min Candlestick

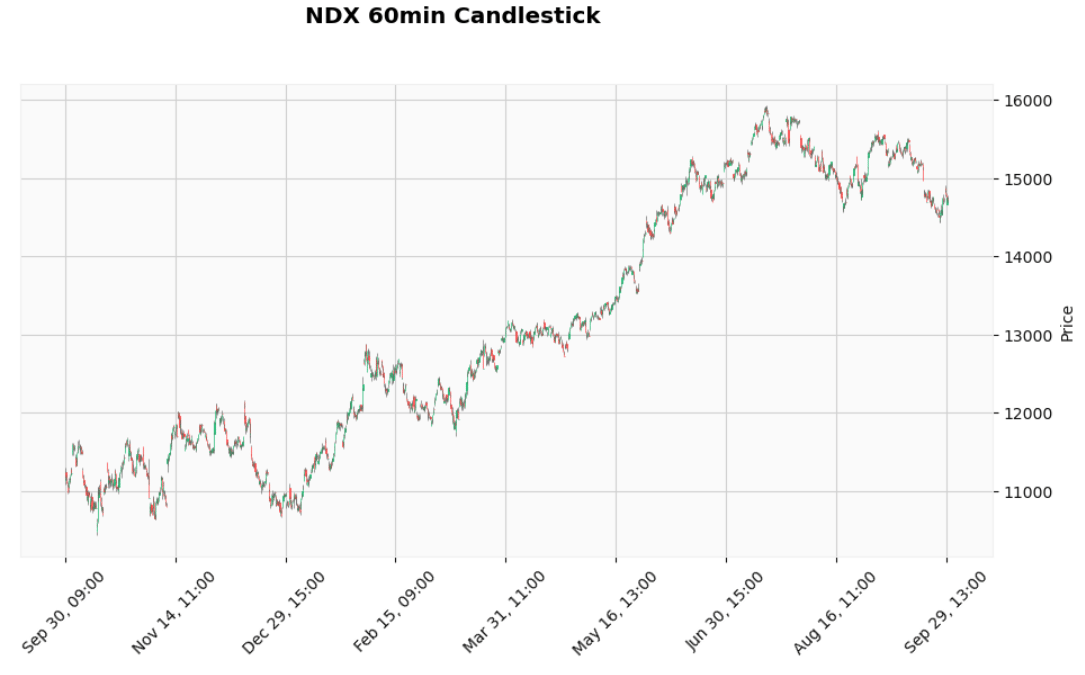

NDX (Nasdaq 100)

plot_index_candles(df_ndx, title="NDX 60min Candlestick")

NDX 60min Candlestick

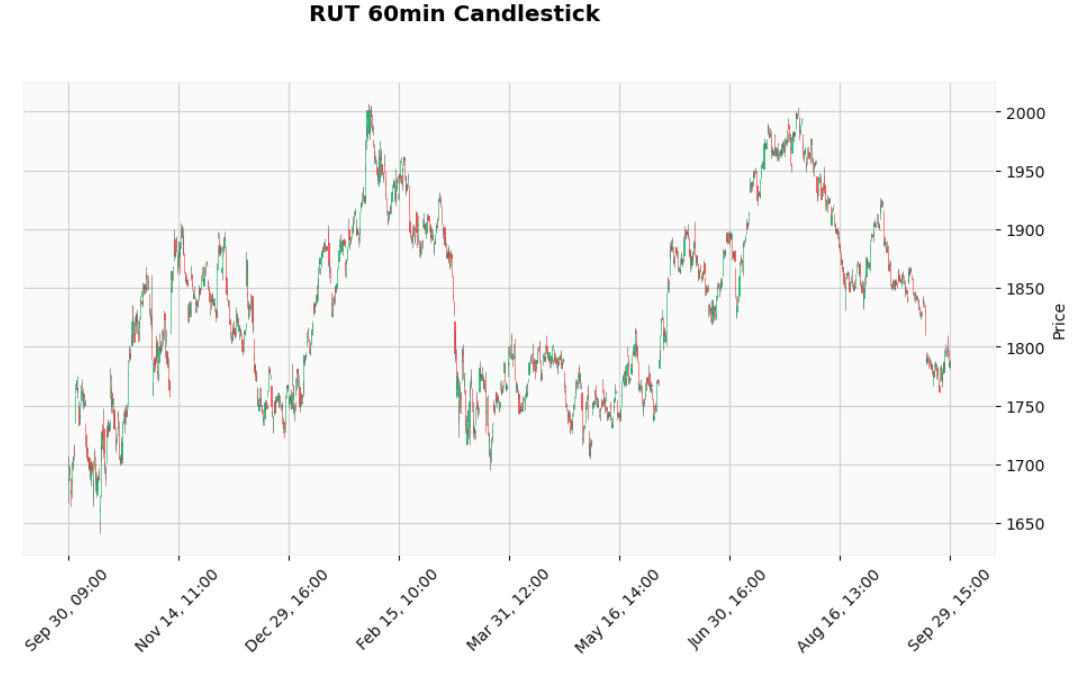

RUT (Russell 2000)

plot_index_candles(df_rut, title="RUT 60min Candlestick")

RUT 60min Candlestick

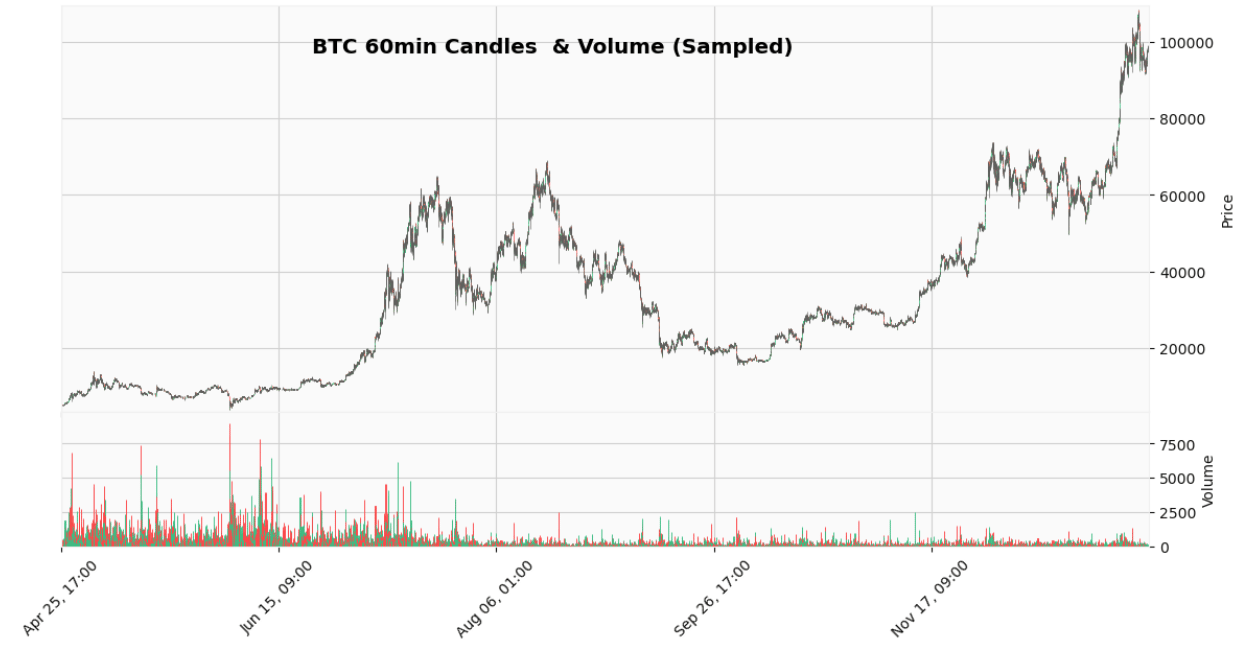

plot_crypto_candles(

df_btc,

sample_size=50_000,

resample_rule="60min",

title="BTC 60min Candles & Volume (Sampled)"

)

BTC 60min Candles & Volume (Sampled)

Remarks:

All FirstRate Data [1] are 1-minute intraday bars (format : timestamp, open, high, low, close, volume).

All datasets are in US Eastern Time (i.e., NY time).

Bars with zero volume are not included.

Volumes for stocks and ETFs are in units of shares.

The historical 1-min OHLC candle dataset from Bitstamp [2] contains 6846600 entries, ranging from 2012–01–01 10:01:00 to 2025–01–07 00:00:00.

Merton–Hawkes Monte Carlo Simulations

In this section, we present a compact implementation of an advanced stochastic volatility framework [3] that integrates Merton-style jump-diffusion modeling [4], Hawkes self-exciting processes [5], Monte Carlo simulation, and multi-asset analysis. It simulates future price paths for multiple assets by combining diffusive (normal) returns with jump shocks, where extreme moves cluster over time through a Hawkes process while ordinary market movements remain random.

The following function follows a four-stage pipeline: (1) data preparation, (2) statistical estimation, (3) Hawkes-driven stochastic simulation, and (4) aggregation/visualization:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

def multi_asset_hawkes_simulation(

assets_data,

alpha=0.05,

beta=0.1,

num_paths=20,

threshold_multiplier=1.5,

dt=1/(6.5*60),

figsize=(14,8)

):

"""

Run Hawkes-driven jump-diffusion Monte Carlo simulation for multiple assets

and plot results.

Parameters

----------

assets_data : dict

Dictionary {asset_name: DataFrame} with 'close' column

alpha : float

Hawkes self-excitation parameter

beta : float

Hawkes decay parameter

num_paths : int

Number of Monte Carlo simulation paths

threshold_multiplier : float

Jump detection threshold (in sigma multiples)

dt : float

Time step

figsize : tuple

Plot size

Returns

-------

results : dict

Simulation results per asset

"""

results = {}

# --- Loop through assets ---

for asset_name, df in assets_data.items():

#Step 1: Data Preparation

df = df.copy()

# Drop unnecessary columns like volume

df = df.drop(columns=['volume'], errors='ignore')

# --- Compute log returns ---

df['log_return'] = np.log(df['close'] / df['close'].shift(1)) #Converts prices into log returns

df = df.dropna()

if df.empty or len(df) < 2:

print(f"Skipping {asset_name}: insufficient data")

continue

returns = df['log_return'].values

T = len(df)

S0 = df['close'].iloc[0]

# Step 2: Estimate Market Dynamics

#These represent the diffusion components

sigma = np.std(returns) #volatility

mu = np.mean(returns) #average drift

# Step 3: Jump Detection

threshold = threshold_multiplier * sigma

jump_mask = np.abs(returns - mu) > threshold

#This step separates normal market moves (diffusion) from extreme events (jumps) using a statistical filtering method that treats jumps as large deviations from typical behavior.

jump_returns = returns[jump_mask]

#Step 4: Jump Intensity (Baseline)

mu_lambda = len(jump_returns) / T

#This estimates average probability of a jump per time step

# --- Monte Carlo simulation ---

simulations = np.zeros((T, num_paths))

for path_idx in range(num_paths):

S = np.zeros(T)

lambda_t = np.zeros(T)

S[0] = S0

lambda_t[0] = mu_lambda

for t in range(1, T):

# Step 5: Hawkes intensity update

#This is a mean-reverting intensity process

# lambda_t increases after jumps & decays back to baseline

lambda_t[t] = lambda_t[t-1] + beta * (mu_lambda - lambda_t[t-1]) * dt

# Jump decision

jump = 0

#Step 6: Jump Occurrence

#A jump occurs with probability proportional to lambda_t

if np.random.rand() < lambda_t[t] * dt:

if len(jump_returns) > 0:

#Step 7: Jump Size

#Rather than assuming a theoretical distribution, we bootstrap actual historical jumps

jump = np.random.choice(jump_returns)

lambda_t[t] += alpha

#This creates self-excitation: A jump increases the probability of future jumps.

#Step 8: Diffusion Component

# Diffusion via bootstrap

# Instead of assuming Gaussian noise, we sample from empirical returns, capturing skewness, fat tails, and the true distribution of market movements.

r_boot = np.random.choice(returns)

#Step 9: Price Evolution

# Price update

S[t] = S[t-1] * np.exp(r_boot + jump)

#This is a discrete-time jump-diffusion model where the diffusion component is given by bootstrapped returns (r_boot) and the jump component by historical jumps

simulations[:, path_idx] = S

# --- Statistics ---

mean_sim = np.mean(simulations, axis=1)

lower_ci = np.percentile(simulations, 2.5, axis=1)

upper_ci = np.percentile(simulations, 97.5, axis=1)

results[asset_name] = {

'simulations': simulations,

'mean': mean_sim,

'lower_ci': lower_ci,

'upper_ci': upper_ci,

'actual': df['close'].values,

'index': df.index

}

# --- Plot ---

n_assets = len(results)

plt.figure(figsize=figsize)

for i, (asset_name, res) in enumerate(results.items(), 1):

plt.subplot(n_assets, 1, i)

plt.plot(res['index'], res['actual'], label=f'Actual {asset_name}', linewidth=2)

plt.plot(res['index'], res['mean'], label='Mean Simulation', linewidth=1.5)

plt.fill_between(

res['index'],

res['lower_ci'],

res['upper_ci'],

alpha=0.3,

label='95% CI'

)

plt.title(f'{asset_name} Hawkes Jump-Diffusion')

plt.ylabel('Price')

plt.legend(loc="upper left")

plt.xlabel('Timestamp')

plt.tight_layout()

plt.show()

return resultsTech Stocks

assets_data = {

'AAPL': df_aapl,

'AMZN': df_amzn,

'TSLA': df_tsla,

'META': df_meta,

'MSFT': df_msft

}

results = multi_asset_hawkes_simulation(assets_data)

Tech Stocks: Hawkes Jump-Diffusion Mean Simulation with 95% CI vs Actual Price

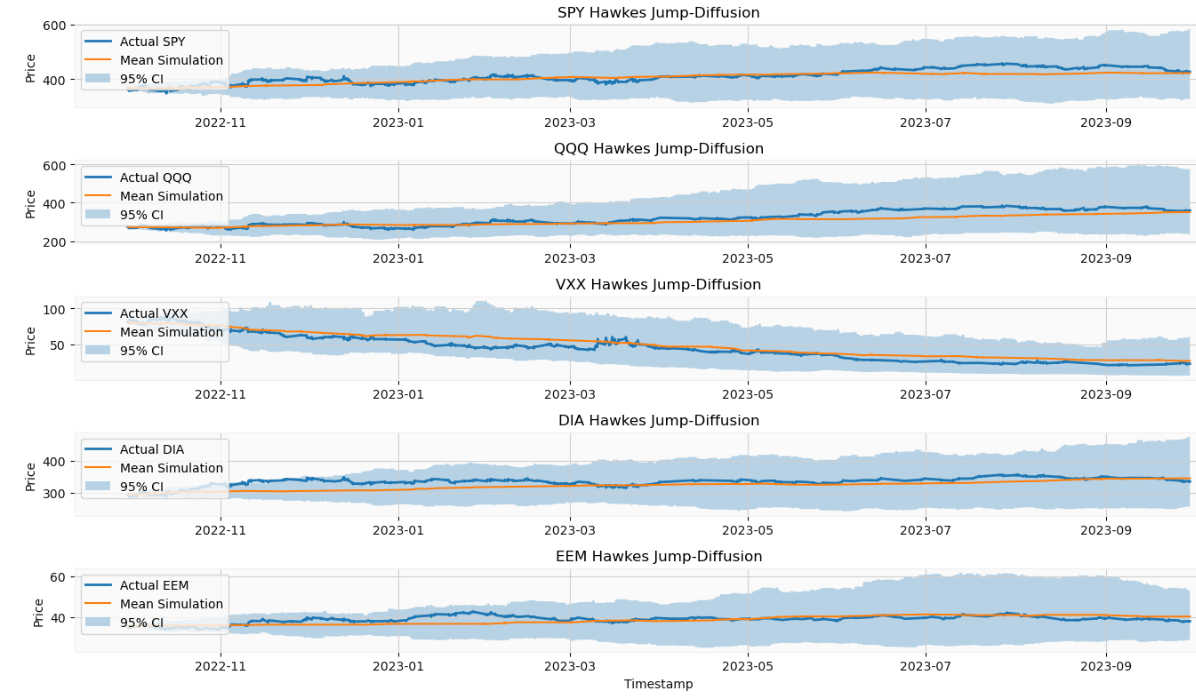

ETFs

assets_data = {

'SPY': df_spy,

'QQQ': df_qqq,

'VXX': df_vxx,

'DIA': df_dia,

'EEM': df_eem

}

results = multi_asset_hawkes_simulation(assets_data)

ETFs: Hawkes Jump-Diffusion Mean Simulation with 95% CI vs Actual Price

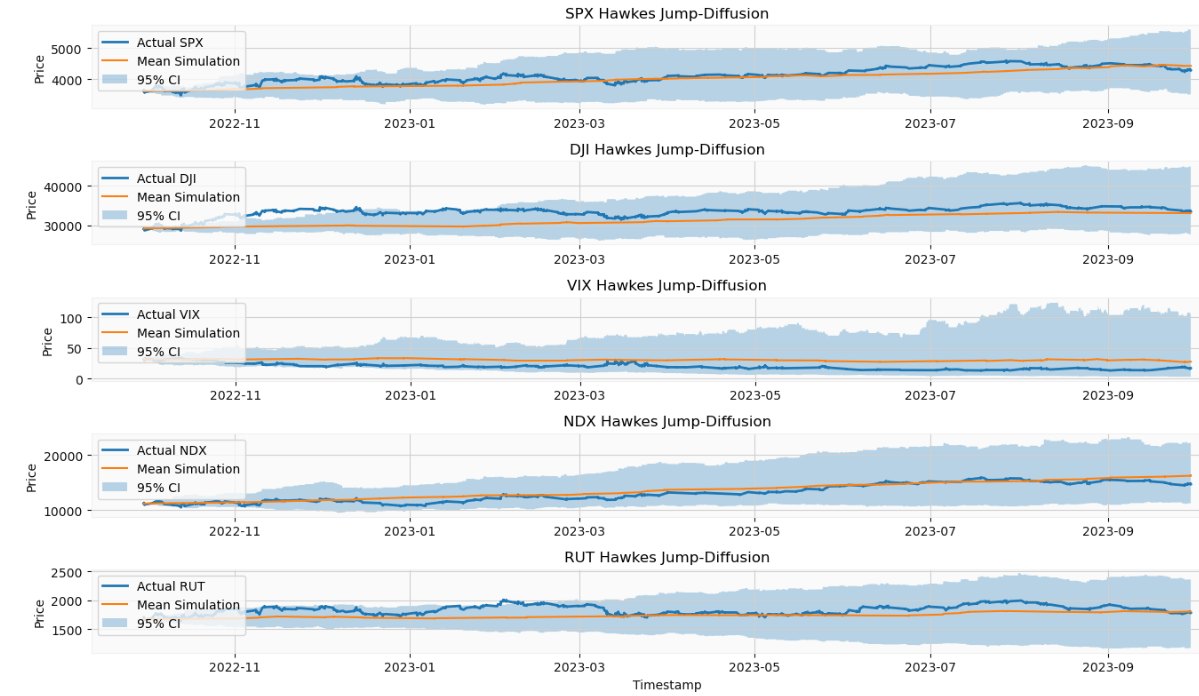

Indices

assets_data = {

'SPX': df_spx,

'DJI': df_dji,

'VIX': df_vix,

'NDX': df_ndx,

'RUT': df_rut

}

results = multi_asset_hawkes_simulation(assets_data)

Indices: Hawkes Jump-Diffusion Mean Simulation with 95% CI vs Actual Price

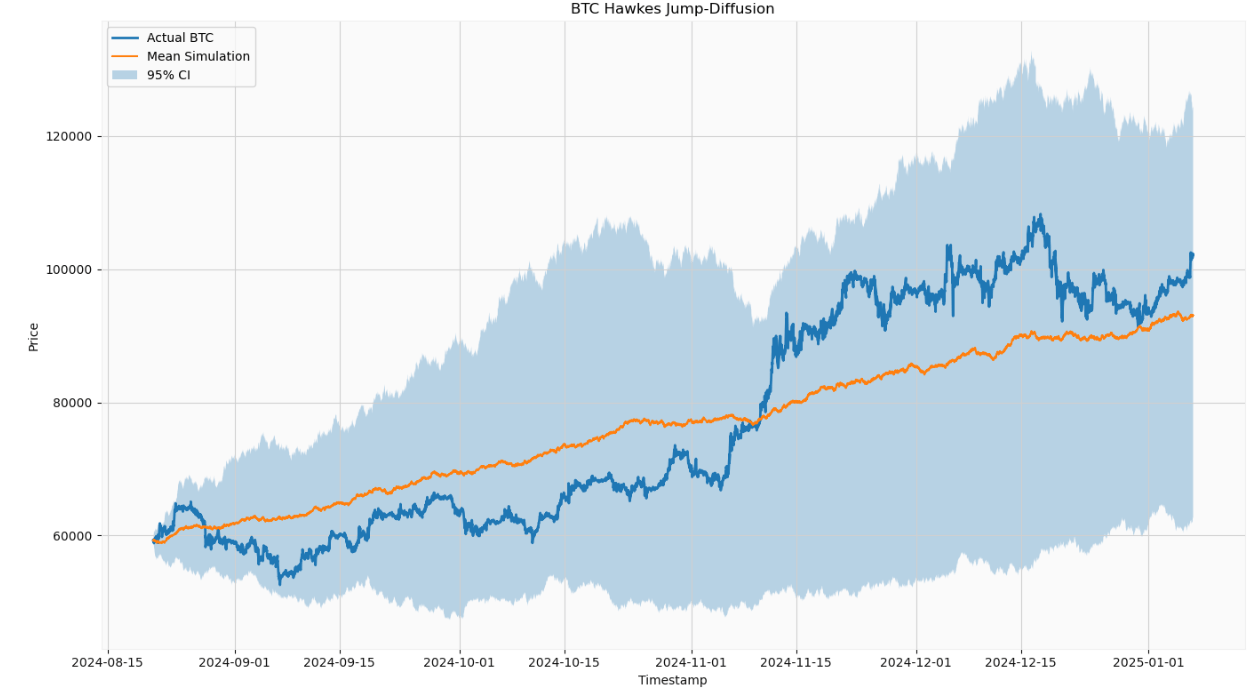

BTC-USD

# Keep the last 200,000 rows

df_btc_sample = df_btc.tail(200_000)

assets_data = {

'BTC': df_btc_sample,

}

results = multi_asset_hawkes_simulation(assets_data)

BTC-USD: Hawkes Jump-Diffusion Mean Simulation with 95% CI vs Actual Price

This function integrates three powerful concepts: (1) jump-diffusion for sudden large moves, (2) Hawkes processes for volatility clustering, and (3) bootstrap simulation to reflect the real market distribution.

Conclusions 🎯

We have implemented a Hawkes-driven jump-diffusion framework that combines return bootstrapping with self-exciting jump dynamics to capture volatility clustering across a multi-asset universe represented by stocks, ETFs, indices, and BTC.

The proposed approach has several key features:

Volatility is modeled using a combination of empirical diffusion using bootstrapped log returns and jump-driven components.

Self-exciting jumps are incorporated, meaning that the occurrence of one jump increases the likelihood of subsequent jumps.

Memory and autocorrelation are handled through the Hawkes intensity, capturing both volatility and jump clustering.

The model generates realistic price distributions with fat tails, clustered jumps, and stochastic paths via Monte Carlo simulation.

Its advantages include the ability to capture both jumps and clustering, and to produce probabilistic multi-path outputs.

However, it requires historical jump data and is more computationally intensive.

This framework is particularly suited for intraday multi-asset modeling, stress testing, market microstructure analysis, and Monte Carlo scenario generation.

Until next time! 👋🚀

Subscribe to our premium content to read the rest.

Become a paying subscriber to get access to this post and other subscriber-only content.

Upgrade