


Premium Members – Your Full Notebook Is Ready

The complete Google Colab notebook from today’s article (with live data, full Hidden Markov Model, interactive charts, statistics, and one-click CSV export) is waiting for you.

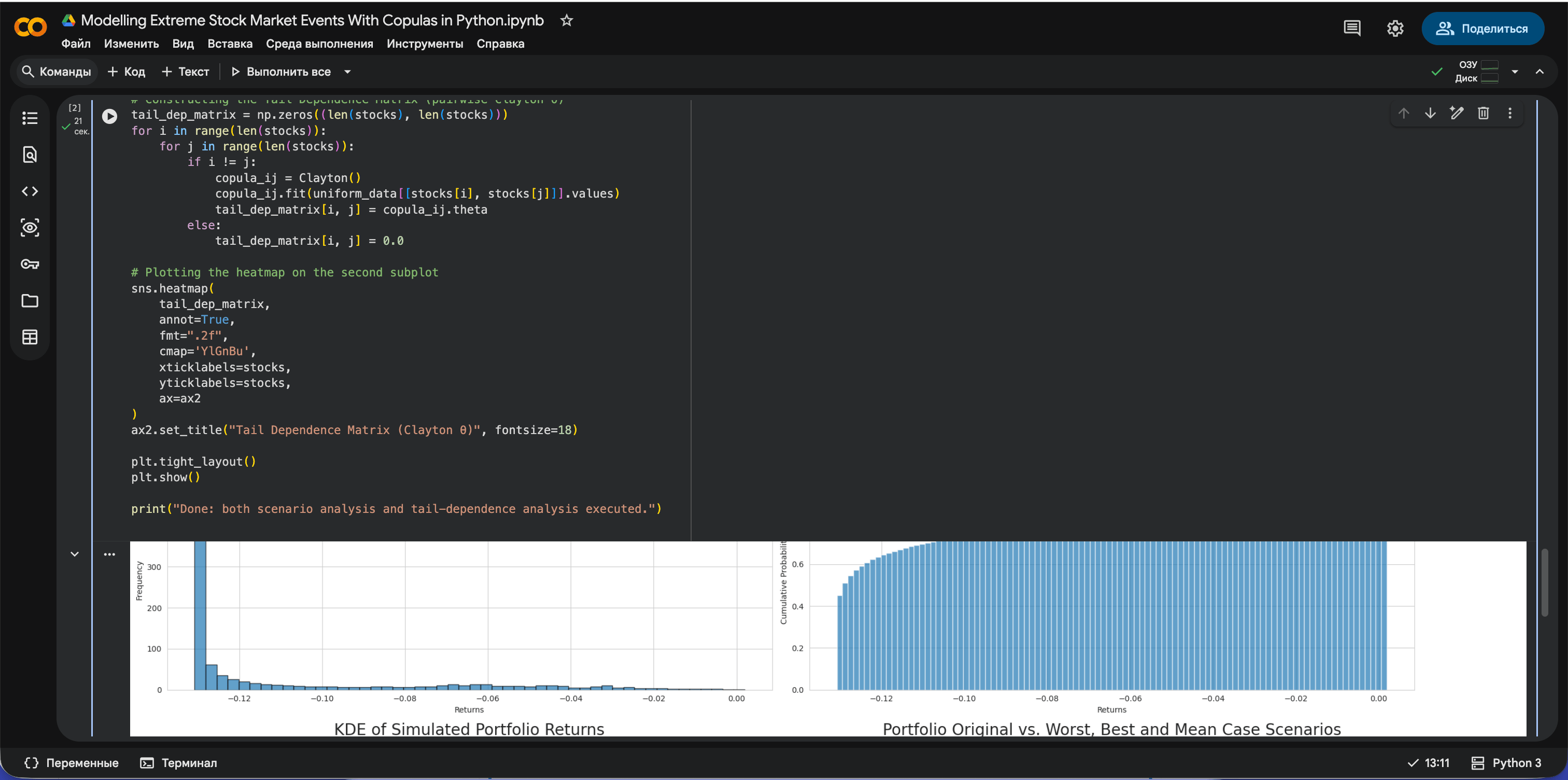

Preview of what you’ll get:

Inside:

Installation of all required Python libraries for copula-based analysis (yfinance, copulae, copulas, seaborn, etc.).

Download of real historical price data for S&P 500 and major tech stocks (MSFT, AAPL, GOOGL) and construction of daily returns.

Quantile transformation of returns into the [0,1][0,1] space to prepare data for copula modelling.

Fitting of bivariate Gaussian copulas between S&P 500 and each stock, and conditional simulation of stock returns given a specified S&P 500 drop (e.g., −5%).

Construction of simulated portfolio returns (equal-weight portfolio of MSFT, AAPL, GOOGL) under the stress scenario.

Four key visualizations for the portfolio scenario analysis:

Histogram of simulated portfolio returns.

Empirical CDF of simulated returns.

KDE (smoothed density) of simulated returns.

Time-series chart comparing original portfolio prices vs. worst‑case, best‑case, and mean scenario trajectories after the shock.

A second block that downloads a larger basket of assets (AAPL, MSFT, GOOGL, AMZN, NVDA, ^GSPC) and computes returns.

Transformation of these returns to uniforms via empirical CDF (rank-based) for tail-dependence analysis.

Rolling Clayton copula fitting on a 250‑day window to extract a time series of the lower-tail dependence parameter (θ) over time.

Computation of a pairwise tail-dependence matrix (Clayton θ for every pair of assets).

Two visual outputs for tail dependence:

Time-series plot of rolling tail dependence parameter θ.

Heatmap of the pairwise tail-dependence matrix across all stocks.

Free readers – you already got the full breakdown and visuals in the article. Paid members – you get the actual tool.

Not upgraded yet? Fix that in 10 seconds here👇

Google Collab Notebook With Full Code Is Available In the End Of The Article Behind The Paywall 👇 (For Paid Subs Only)

1. Introduction

Traditionally, we’ve leaned on correlation matrices for understanding inter-asset dynamics. Yet, as market crashes of the past have shown, when the storm hits, many models go haywire. Suddenly, correlations seem to converge to one, and diversification, the oft-touted risk management mantra, appears to offer little refuge.

This unexpected synchronization, where assets move in near-perfect harmony during downturns, has caused leading finance firms and institutional investors to increasingly turn their attention to more sophisticated tools to analyze the tail of asset distributions. This is where copulas come into play. These tools, moving beyond simplistic correlation, offer a nuanced understanding of asset dependencies, especially during those tumultuous times when markets plunge into chaos.


2. Understanding Copulas

The core of any risk management model lies in its capacity to grasp the intricate patterns and dependencies between various assets in a portfolio. The richer the understanding, the better equipped we are to handle market upheavals.

2.1. What are Copulas?

Copulas capture the dependence structure between random variables without altering their individual behaviors. They act as bridges, connecting the behaviors of assets, and allowing a deeper study of their joint movement. This understanding is critical for financial analysis and risk management. While standard correlation captures linear relationships, it can be blind to intricate dependencies in extreme market conditions. In times when assets show tail dependence, copulas provide a more comprehensive insight into these dynamics.


2.2. The Mathematics Behind Copulas

Copulas stem from multivariate probability theory. Let’s dive into a basic mathematical understanding:

Definition: A bivariate copula C: [0,1]^2 → [0,1] is a joint cumulative distribution function (CDF) whose one-dimensional margins are uniform on [0, 1]. If U and V are random variables with uniform distributions on [0, 1], then:

$$C(u, v) = P(U \le u,\ V \le v)$$

Defining the joint distribution of U and V using copulas.

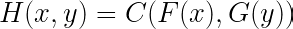

Sklar’s Theorem: Every multivariate distribution function can be expressed in terms of its margins and a copula. Given a bivariate distribution function H(x,y) with margins F(x) and G(y), there exists a copula C(u,v) such that:

$$H(x, y) = C\big(F(x),\ G(y)\big)$$

Expressing joint distribution H in terms of marginal distributions F and G using a copula.

This theorem underscores the beauty of copulas: the ability to separate the dependence structure from the margins. To put it simply, imagine you’re building a house. The margins are like the foundational pillars of the house, while the copula is the blueprint that dictates how these pillars are connected. No matter what materials (marginal distributions) you use for the pillars, the blueprint (copula) remains consistent in connecting them.
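To make that separation concrete, here is a minimal sketch (not the article's notebook code) that draws dependent uniforms from a Gaussian copula and then attaches two arbitrary margins. The correlation of 0.7 and the choice of Student-t and exponential margins are assumptions picked purely for illustration.

```python
import numpy as np
from scipy.stats import norm, t, expon, kendalltau

rng = np.random.default_rng(0)
rho = 0.7  # illustrative copula correlation (assumption)

# Step 1: sample correlated standard normals, then map them to uniforms.
cov = [[1.0, rho], [rho, 1.0]]
z = rng.multivariate_normal([0.0, 0.0], cov, size=5000)
u = norm.cdf(z)  # each column is Uniform(0, 1); jointly they follow a Gaussian copula

# Step 2: apply inverse CDFs to impose whatever margins we want.
x = t.ppf(u[:, 0], df=4)   # heavy-tailed margin
y = expon.ppf(u[:, 1])     # skewed, positive margin

# Rank-based dependence is unchanged by the monotone marginal transforms.
tau_uniform = kendalltau(u[:, 0], u[:, 1])[0]
tau_margins = kendalltau(x, y)[0]
print(f"Kendall's tau on uniforms:   {tau_uniform:.3f}")
print(f"Kendall's tau after margins: {tau_margins:.3f}")
```

The two printed values should agree, since swapping the margins (the pillars) leaves the rank dependence (the blueprint) untouched.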

Fig 1. Visual Exploration of Gaussian Copulas: Transitioning from independent stock returns to a dependence structure defined by the Gaussian copula, alongside the dynamic evolution of their correlation. The histograms depict the distribution of stocks A and B throughout the transformation process.

Various types of copulas can be found in literature, and each serves its unique purpose in modelling dependencies:

Gaussian Copula: Perhaps the most well-known, the Gaussian copula derives from the multivariate normal distribution and is parameterized by a correlation matrix. It is often favored for its mathematical simplicity, but it has no tail dependence whenever the correlation is below one in absolute value, so it can underestimate joint extremes.

Clayton Copula: This copula is asymmetrical and is particularly useful when modelling lower tail dependence, which means it’s well-suited for scenarios where joint extreme low values are of concern.

Gumbel Copula: The opposite of the Clayton copula, the Gumbel copula models upper tail dependence. It’s employed when the concern is about joint extreme high values.

Frank Copula: This copula does not capture tail dependencies but offers an intermediate dependence structure. It’s useful in scenarios where the tails of distributions are not of primary concern.
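The practical difference between these families shows up in the tails. The sketch below is an illustration under stated assumptions: θ = 2 for the Clayton copula, a Gaussian copula matched to the same Kendall's tau via ρ = sin(πτ/2), and hypothetical helper names. It estimates how often both variables land in their worst 5% together.

```python
import numpy as np
from scipy.stats import norm

rng = np.random.default_rng(42)
n, q = 200_000, 0.05
theta = 2.0                       # illustrative Clayton parameter (assumption)
tau = theta / (theta + 2.0)       # Kendall's tau implied by the Clayton theta
rho = np.sin(np.pi * tau / 2.0)   # Gaussian correlation with the same tau

def sample_clayton(theta, n, rng):
    """Draw (u, v) from a Clayton copula by conditional inversion."""
    u = rng.uniform(size=n)
    w = rng.uniform(size=n)
    v = (u ** (-theta) * (w ** (-theta / (1.0 + theta)) - 1.0) + 1.0) ** (-1.0 / theta)
    return u, v

def sample_gaussian(rho, n, rng):
    """Draw (u, v) from a bivariate Gaussian copula."""
    z1 = rng.standard_normal(n)
    z2 = rho * z1 + np.sqrt(1.0 - rho ** 2) * rng.standard_normal(n)
    return norm.cdf(z1), norm.cdf(z2)

def joint_lower_tail(u, v, q):
    """Estimate P(V <= q | U <= q) from a sample."""
    return np.mean(v[u <= q] <= q)

p_clayton = joint_lower_tail(*sample_clayton(theta, n, rng), q)
p_gaussian = joint_lower_tail(*sample_gaussian(rho, n, rng), q)
print(f"Clayton : {p_clayton:.3f}")
print(f"Gaussian: {p_gaussian:.3f}")
```

With the rank correlation matched, the Clayton sample should show a clearly higher joint-crash frequency than the Gaussian one, which is the lower-tail behaviour described above.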

3. Python Implementation

3.1 Scenario Modelling with Copulas using Python

Scenario modelling in finance simulates potential future outcomes based on assumptions, aiding in understanding investment sensitivities to market shifts. The provided code examines how a hypothetical portfolio might react to a significant S&P 500 drop.

Portfolio Definition and Data Retrieval

We build a hypothetical portfolio consisting of three major tech stocks and retrieve the data with yfinance:

```python
import yfinance as yf

portfolio_tickers = ["MSFT", "AAPL", "GOOGL"]
end_date = "2022-01-01"
start_date = "2020-01-01"
symbols = ["^GSPC"] + portfolio_tickers

# auto_adjust=False keeps the "Adj Close" column, which newer yfinance releases omit by default
data = yf.download(symbols, start=start_date, end=end_date, auto_adjust=False)["Adj Close"]
```

Quantile Transformation for Copula Preparation

To make the data suitable for copula analysis, each return series must be mapped to the [0,1] range. A quantile transformation does this by replacing every return with its empirical quantile, so that each margin becomes approximately uniform.

```python
for symbol in returns.columns:
    qt = QuantileTransformer(output_distribution='uniform')
    data_uniform[symbol] = qt.fit_transform(returns[[symbol]]).flatten()
    quantile_transformers[symbol] = qt
```

Conditional Sampling Using Copulas

Given that our primary interest is to understand how a certain drop in the S&P 500 would affect our portfolio stocks, we need to establish a joint behavior between the S&P 500 and each stock. This relationship is captured using a bivariate Gaussian copula.

Using the copula, a conditional distribution is derived, which tells us about the potential behavior of our stocks’ returns, given a certain drop in the S&P 500. This is achieved via the conditional_sample function.

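```python
import numpy as np
from scipy.stats import norm

def conditional_sample(u1, rho, n_samples=1000):
    # Probability levels at which the conditional distribution is evaluated
    levels = np.linspace(0.001, 0.999, n_samples)
    # Conditional quantiles of U2 given U1 = u1 under a Gaussian copula
    u2 = norm.cdf(rho * norm.ppf(u1) + np.sqrt(1 - rho**2) * norm.ppf(levels))
    return levels, u2
```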

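For reference, under a bivariate Gaussian copula the conditional quantile of the stock's uniform value u2, given the index value u1, has a closed form: u2 = Φ(ρ Φ⁻¹(u1) + √(1 − ρ²) Φ⁻¹(w)) for a probability level w. The sketch below is a standalone sanity check rather than the notebook's code: it compares that formula with a brute-force simulation. The values rho = 0.8 and u1 = 0.05, and the function name conditional_quantile, are assumptions chosen for illustration.

```python
import numpy as np
from scipy.stats import norm

def conditional_quantile(u1, rho, w):
    """w-quantile of U2 given U1 = u1 under a Gaussian copula with correlation rho."""
    z = rho * norm.ppf(u1) + np.sqrt(1.0 - rho ** 2) * norm.ppf(w)
    return norm.cdf(z)

rng = np.random.default_rng(1)
rho, u1 = 0.8, 0.05  # illustrative values (assumptions)

# Brute force: simulate the copula and keep draws whose U1 lands near u1.
z1 = rng.standard_normal(2_000_000)
z2 = rho * z1 + np.sqrt(1.0 - rho ** 2) * rng.standard_normal(z1.size)
u1_sim, u2_sim = norm.cdf(z1), norm.cdf(z2)
near = np.abs(u1_sim - u1) < 0.002

levels = np.array([0.1, 0.5, 0.9])
empirical = np.quantile(u2_sim[near], levels)
closed_form = conditional_quantile(u1, rho, levels)
for w, e, c in zip(levels, empirical, closed_form):
    print(f"w={w:.1f}  simulated={e:.3f}  closed form={c:.3f}")
```

When the index sits at its 5th percentile and the correlation is high, even the conditional 90% quantile of the stock stays well below its unconditional median, which is the effect the scenario analysis relies on.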

Simulation & Scenario Analysis

For each of the portfolio’s stocks, given the drop in the S&P 500 (specified as a percentage), the copula model is used to simulate potential stock returns. These simulated returns provide insights into probable worst-case, best-case, and mean scenarios for each stock.

```python
results = {}

# Map the assumed S&P 500 drop into the [0, 1] quantile space (identical for every stock)
index_drop = quantile_transformers["^GSPC"].transform(np.array([[market_drop_percentage]]))[0][0]

for ticker in portfolio_tickers:
    # Bivariate assumption with ^GSPC and each stock
    bi_data = data_uniform[["^GSPC", ticker]]
    bi_copula = GaussianCopula(dim=2)
    bi_copula.fit(bi_data.values)
    rho = bi_copula.params[0]

    conditional_u2, conditional_cdf = conditional_sample(index_drop, rho)
    conditional_returns = quantile_transformers[ticker].inverse_transform(conditional_cdf.reshape(-1, 1)).flatten()
    results[ticker] = conditional_returns
```

Complete Code

Putting all the above together, we get the following Python code:

```python
import numpy as np
import pandas as pd
import yfinance as yf
import seaborn as sns
from sklearn.preprocessing import QuantileTransformer
from scipy.stats import norm
import matplotlib.pyplot as plt
from copulae import GaussianCopula
import warnings; warnings.filterwarnings('ignore')

# Define the portfolio
portfolio_tickers = ["MSFT", "AAPL", "GOOGL"]

# 1. Fetch real data
end_date = "2022-01-01"
start_date = "2020-01-01"
symbols = ["^GSPC"] + portfolio_tickers
# auto_adjust=False keeps the "Adj Close" column, which newer yfinance releases omit by default
data = yf.download(symbols, start=start_date, end=end_date, auto_adjust=False)["Adj Close"]
market_drop_percentage = -0.05

# 2. Compute daily returns
returns = data.pct_change().dropna()

# 3. Transform returns to [0,1] range using quantile transformation
quantile_transformers = {}
data_uniform = pd.DataFrame()
for symbol in returns.columns:
    qt = QuantileTransformer(output_distribution='uniform')
    data_uniform[symbol] = qt.fit_transform(returns[[symbol]]).flatten()
    quantile_transformers[symbol] = qt

# Define the function for conditional sampling
def conditional_sample(u1, rho, n_samples=1000):
    # Probability levels at which the conditional distribution is evaluated
    levels = np.linspace(0.001, 0.999, n_samples)
    # Conditional quantiles of U2 given U1 = u1 under a Gaussian copula
    u2 = norm.cdf(rho * norm.ppf(u1) + np.sqrt(1 - rho**2) * norm.ppf(levels))
    return levels, u2

# Simulation for each stock in the portfolio
results = {}
# Map the assumed S&P 500 drop into the [0, 1] quantile space (identical for every stock)
index_drop = quantile_transformers["^GSPC"].transform(np.array([[market_drop_percentage]]))[0][0]
for ticker in portfolio_tickers:
    # Bivariate assumption with ^GSPC and each stock
    bi_data = data_uniform[["^GSPC", ticker]]
    bi_copula = GaussianCopula(dim=2)
    bi_copula.fit(bi_data.values)
    rho = bi_copula.params[0]
    levels, conditional_u = conditional_sample(index_drop, rho)
    # Map the conditional quantiles back to the stock's return scale
    conditional_returns = quantile_transformers[ticker].inverse_transform(conditional_u.reshape(-1, 1)).flatten()
    results[ticker] = conditional_returns

# Apply the plot style before any figure is created so that it takes effect
sns.set_style("whitegrid")
drop_label = f"{abs(market_drop_percentage):.0%}"

'''
# Visualization for each stock in the portfolio
# Remove the surrounding triple quotes to see the impact on each stock in the portfolio
for ticker, conditional_returns in results.items():
    fig, ax = plt.subplots(2, 2, figsize=(25, 10))
    last_known_price = data[ticker].iloc[-1]
    min_return = np.min(conditional_returns)
    max_return = np.max(conditional_returns)
    mean_return = np.mean(conditional_returns)
    final_min_price = last_known_price * (1 + min_return)
    final_max_price = last_known_price * (1 + max_return)
    final_mean_price = last_known_price * (1 + mean_return)
    simulated_dates = pd.date_range(start=data.index[-1], periods=31, freq='D')[1:]
    min_price_trajectory = [last_known_price] + [final_min_price] * (len(simulated_dates) - 1)
    max_price_trajectory = [last_known_price] + [final_max_price] * (len(simulated_dates) - 1)
    mean_price_trajectory = [last_known_price] + [final_mean_price] * (len(simulated_dates) - 1)

    # Plot 1: Histogram of Simulated Returns
    ax[0, 0].hist(conditional_returns, bins=50, edgecolor='k', alpha=0.7)
    ax[0, 0].set_title(f"Simulated {ticker} Returns given {drop_label} drop in S&P 500")
    ax[0, 0].set_xlabel("Returns")
    ax[0, 0].set_ylabel("Frequency")

    # Plot 2: CDF of Simulated Returns
    ax[0, 1].hist(conditional_returns, bins=100, density=True, cumulative=True, alpha=0.7)
    ax[0, 1].set_title('CDF of Simulated Returns')

    # Plot 3: KDE of Simulated Returns
    sns.kdeplot(conditional_returns, fill=True, ax=ax[1, 0])
    ax[1, 0].set_title('KDE of Simulated Returns')

    # Plot 4: Ticker Original vs. Worst, Best, and Mean Case Scenarios
    data[ticker].plot(ax=ax[1, 1], label="Original Prices")
    pd.Series(min_price_trajectory, index=simulated_dates).plot(ax=ax[1, 1], label="Worst-Case Scenario", linestyle='--', color="red")
    pd.Series(max_price_trajectory, index=simulated_dates).plot(ax=ax[1, 1], label="Best-Case Scenario", linestyle='--', color="green")
    pd.Series(mean_price_trajectory, index=simulated_dates).plot(ax=ax[1, 1], label="Mean Scenario", linestyle='--', color="blue")
    label_x_position = simulated_dates[-10]
    ax[1, 1].annotate(f"{min_return*100:.2f}% (Worst Scenario)", (label_x_position, final_min_price * 0.98), fontsize=12, ha="left", color="red")
    ax[1, 1].annotate(f"{max_return*100:.2f}% (Best Scenario)", (label_x_position, final_max_price * 1.02), fontsize=12, ha="left", color="green")
    ax[1, 1].annotate(f"{mean_return*100:.2f}% (Mean Scenario)", (label_x_position, final_mean_price), fontsize=12, ha="left", color="blue")
    ax[1, 1].set_title(f"{ticker} Original vs. Worst, Best and Mean Case Scenarios")
    ax[1, 1].legend()

    plt.tight_layout()
    plt.show()
'''

# Compute portfolio returns from individual stock returns.
# Averaging at matching probability levels treats the three stocks as moving together.
portfolio_returns = np.mean(np.array([results[ticker] for ticker in portfolio_tickers]), axis=0)

# Visualization for the Portfolio
fig, ax = plt.subplots(2, 2, figsize=(25, 10))
last_known_prices = data[portfolio_tickers].iloc[-1]
portfolio_last_known_price = np.mean(last_known_prices)  # Equally weighted
min_return = np.min(portfolio_returns)
max_return = np.max(portfolio_returns)
mean_return = np.mean(portfolio_returns)
final_min_price = portfolio_last_known_price * (1 + min_return)
final_max_price = portfolio_last_known_price * (1 + max_return)
final_mean_price = portfolio_last_known_price * (1 + mean_return)
simulated_dates = pd.date_range(start=data.index[-1], periods=31, freq='D')[1:]
min_price_trajectory = [portfolio_last_known_price] + [final_min_price] * (len(simulated_dates) - 1)
max_price_trajectory = [portfolio_last_known_price] + [final_max_price] * (len(simulated_dates) - 1)
mean_price_trajectory = [portfolio_last_known_price] + [final_mean_price] * (len(simulated_dates) - 1)

# Plot 1: Histogram of Simulated Returns
ax[0, 0].hist(portfolio_returns, bins=50, edgecolor='k', alpha=0.7)
ax[0, 0].set_title(f"Simulated Portfolio Returns given {drop_label} drop in S&P 500", fontsize=25)
ax[0, 0].set_xlabel("Returns")
ax[0, 0].set_ylabel("Frequency")

# Plot 2: CDF of Simulated Returns
ax[0, 1].hist(portfolio_returns, bins=100, density=True, cumulative=True, alpha=0.7)
ax[0, 1].set_title('CDF of Simulated Portfolio Returns', fontsize=25)

# Plot 3: KDE of Simulated Returns
sns.kdeplot(portfolio_returns, fill=True, ax=ax[1, 0])
ax[1, 0].set_title('KDE of Simulated Portfolio Returns', fontsize=25)

# Plot 4: Portfolio Original vs. Worst, Best, and Mean Case Scenarios
portfolio_prices = data[portfolio_tickers].mean(axis=1)
portfolio_prices.plot(ax=ax[1, 1], label="Original Prices")
pd.Series(min_price_trajectory, index=simulated_dates).plot(ax=ax[1, 1], label="Worst-Case Scenario", linestyle='--', color="red")
pd.Series(max_price_trajectory, index=simulated_dates).plot(ax=ax[1, 1], label="Best-Case Scenario", linestyle='--', color="green")
pd.Series(mean_price_trajectory, index=simulated_dates).plot(ax=ax[1, 1], label="Mean Scenario", linestyle='--', color="blue")
label_x_position = simulated_dates[-10]
ax[1, 1].annotate(f"{min_return*100:.2f}% (Worst Scenario)", (label_x_position, final_min_price * 0.98), fontsize=16, ha="left", color="red")
ax[1, 1].annotate(f"{max_return*100:.2f}% (Best Scenario)", (label_x_position, final_max_price * 1.02), fontsize=16, ha="left", color="green")
ax[1, 1].annotate(f"{mean_return*100:.2f}% (Mean Scenario)", (label_x_position, final_mean_price), fontsize=16, ha="left", color="blue")
ax[1, 1].set_title("Portfolio Original vs. Worst, Best, and Mean Case Scenarios", fontsize=25)
ax[1, 1].legend()

plt.tight_layout()
plt.show()
```

Fig 2. Scenario Analysis of Portfolio Returns: The visual representation depicts the simulated portfolio returns based on a given 5% immediate drop in the S&P 500. The four subplots highlight the Portfolio’s distribution, cumulative distribution, kernel density estimation of the returns, and a comparative trajectory of original prices against the worst-case, best-case, and mean scenarios over a forecasted period.

Histogram: This showcases the distribution of the potential portfolio returns after the market shock. It allows the analyst to quickly identify the range and frequency of these returns.

Cumulative Distribution Function (CDF): This provides a cumulative perspective on the returns, helping identify the probability that the returns will fall within a certain range. For instance, if one wanted to see the worst 10% of outcomes, they’d look at the 10th percentile on the CDF.

Kernel Density Estimation (KDE): A smoothed version of the histogram, this offers a clearer view of the distribution’s shape, helping highlight modes and other features that might not be as apparent in a histogram.

Comparative Trajectory: This plots the forecasted portfolio value trajectory over a specified period, comparing the original trajectory with potential best-case, worst-case, and mean scenarios after the shock.

The visualization helps in understanding the potential downside (and upside) risks the portfolio might face in the event of a significant market downturn. By evaluating 1,000 conditional outcomes per stock, it captures a broad range of potential outcomes, offering insights into the possible volatility and fluctuations of portfolio value.
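Reading tail percentiles off a simulated distribution can also be done directly in code. The sketch below uses a synthetic stand-in array (simulated_returns, drawn from a normal distribution purely as a placeholder, not the article's portfolio results) and reports the 10th percentile together with the average of the outcomes at or below it.

```python
import numpy as np

rng = np.random.default_rng(7)
# Placeholder for simulated portfolio returns (assumption: synthetic, not the article's data).
simulated_returns = rng.normal(loc=-0.04, scale=0.02, size=1000)

level = 10  # percentile of interest, i.e. the worst 10% of outcomes
cutoff = np.percentile(simulated_returns, level)
tail = simulated_returns[simulated_returns <= cutoff]
expected_shortfall = tail.mean()  # mean return within the worst 10%

print(f"{level}th percentile return: {cutoff:.2%}")
print(f"Average return in the worst {level}%: {expected_shortfall:.2%}")
```

The first number answers "how bad is the boundary of the worst 10%", while the second summarizes how bad outcomes are once that boundary is crossed.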

3.2 Tail Dependence with Copulas in Python

It’s pivotal to deepen our understanding of the underlying relationships between assets in our portfolio. Scenario analysis allowed us to perceive the potential impacts of various hypothetical market events. However, to comprehend the systemic risks associated with our portfolio, we must look beyond individual stock behaviors and evaluate their joint behavior, especially during extreme market conditions.

Data Preprocessing:

The to_uniform function in the code below converts the returns data to a uniform distribution using the empirical cumulative distribution function (ECDF).
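As a small illustration of that transform on made-up numbers, ranking each observation and dividing by n + 1 yields pseudo-observations strictly inside (0, 1), which avoids infinite values if an inverse CDF is applied later. The sample values below are invented for the example.

```python
import numpy as np
from scipy.stats import rankdata

def to_uniform(column):
    """Map observations to (0, 1) via their ranks (empirical CDF scaled by n + 1)."""
    n = len(column)
    return rankdata(column) / (n + 1)

sample_returns = np.array([-0.031, 0.004, 0.012, -0.008, 0.027])  # made-up values
u = to_uniform(sample_returns)
print(u)
```

With five observations, the smallest return maps to 1/6 and the largest to 5/6, so neither endpoint 0 nor 1 is ever produced.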

Rolling Time Window Analysis:

A rolling window analysis is performed on the data with a window size of 250 days.

Within each window, a bivariate Clayton copula is fitted to a pair of assets to capture their dependence structure.

The tail dependence parameter (theta for Clayton) is extracted for each window, giving a time series of tail dependencies.

```python
window_size = 250

for start in range(0, len(uniform_data) - window_size):
    ...
    copula.fit(window_data.values)
    tail_parameters.append(copula.theta)
```

Complete Code

Putting all the above together, we get the following Python code:

```python
import yfinance as yf
import numpy as np
import matplotlib.pyplot as plt
import seaborn as sns
from scipy.stats import rankdata
from copulas.bivariate import Clayton
import warnings; warnings.filterwarnings('ignore')

# 1. Data Collection:
# Define stock basket
stocks = ["AAPL", "MSFT", "GOOGL", "AMZN", "NVDA", "^GSPC"]

# Fetch data (auto_adjust=False keeps the "Adj Close" column in newer yfinance releases)
data = yf.download(stocks, start="2000-01-01", end="2023-01-01", auto_adjust=False)['Adj Close']

# 2. Data Preprocessing:
# Compute daily returns and drop NaN values
returns = data.pct_change().dropna()

# Convert data to uniform using ECDF
def to_uniform(column):
    n = len(column)
    return rankdata(column) / (n + 1)

uniform_data = returns.apply(to_uniform)

# 3. Rolling Time Window Analysis:
window_size = 250

# A bivariate Clayton copula describes one pair at a time, so the pair is named explicitly.
# Here it is the first two columns of the downloaded data; swap in any two tickers.
rolling_pair = list(uniform_data.columns[:2])

tail_parameters = []
for start in range(0, len(uniform_data) - window_size):
    window_data = uniform_data[rolling_pair].iloc[start:start + window_size]
    copula = Clayton()
    copula.fit(window_data.values)
    # Extract tail parameter (for Clayton, the theta parameter)
    tail_parameters.append(copula.theta)

# 4. Visualization:
fig, (ax1, ax2) = plt.subplots(1, 2, figsize=(20, 5))  # 1 row, 2 columns

# Plotting the Tail Dependence Over Time on the first subplot
ax1.plot(returns.index[window_size:], tail_parameters)
ax1.set_title(f'Tail Dependence Over Time ({rolling_pair[0]} vs. {rolling_pair[1]})', fontsize=25)
ax1.set_ylabel('Tail Dependence Parameter')

# Constructing the Tail Dependence Matrix (Clayton theta for every pair, diagonal left at zero)
tail_dep_matrix = np.zeros((len(stocks), len(stocks)))
for i in range(len(stocks)):
    for j in range(len(stocks)):
        if i != j:
            copula_ij = Clayton()
            copula_ij.fit(uniform_data[[stocks[i], stocks[j]]].values)
            tail_dep_matrix[i, j] = copula_ij.theta

# Plotting the heatmap on the second subplot
sns.heatmap(tail_dep_matrix, annot=True, cmap='YlGnBu', xticklabels=stocks, yticklabels=stocks, ax=ax2)
ax2.set_title("Tail Dependence Matrix", fontsize=25)

plt.tight_layout()
plt.show()
```

Fig 3. Visual Analysis of Tail Dependence among Selected Stocks: The left graph reveals the rolling tail dependence over time, highlighting periods with heightened joint extreme behavior. The right heatmap provides a pairwise tail dependence matrix, offering insights into which pairs of assets exhibit stronger extreme co-movements. In particular, the intensity of colors in the heatmap denotes the strength of tail dependence between asset pairs, with darker shades indicating stronger dependence.

4. The Power of Copulas in Financial Analysis

4.1 Why copulas offer a superior approach for scenario modelling

Copulas stand out because they allow for the examination of individual asset behaviors (through their marginals) and their interrelationships (through the copula itself). This duality makes them perfect for scenario modelling, capturing nuanced relationships beyond mere correlation.

4.2 Insights derived from applying copulas and tail dependencies

From our analysis, observing the evolution of tail dependencies over time can provide crucial insights into how the dependency structure shifts during market events. A rising tail dependence parameter, for instance, might indicate increasing concurrence of extreme losses in assets, signaling a red flag for diversified portfolios.
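Because the Clayton θ is unbounded, it can help to convert it to quantities on a fixed scale. For the Clayton family, the lower tail dependence coefficient is λ_L = 2^(−1/θ) and Kendall's tau is θ/(θ + 2). The sketch below applies both formulas to a few example θ values; the values and function names are illustrative assumptions, not output from the analysis above.

```python
import numpy as np

def clayton_lower_tail(theta):
    """Lower tail dependence coefficient of a Clayton copula (theta > 0)."""
    theta = np.asarray(theta, dtype=float)
    return 2.0 ** (-1.0 / theta)

def clayton_kendall_tau(theta):
    """Kendall's tau implied by a Clayton copula parameter."""
    theta = np.asarray(theta, dtype=float)
    return theta / (theta + 2.0)

example_thetas = np.array([0.5, 1.0, 2.0, 4.0])  # illustrative values
for th, lam, tau in zip(example_thetas,
                        clayton_lower_tail(example_thetas),
                        clayton_kendall_tau(example_thetas)):
    print(f"theta={th:.1f}  lambda_L={lam:.3f}  tau={tau:.3f}")
```

On this scale, λ_L reads as the limiting probability that one asset is in its extreme lower tail given that the other is, so a move in θ from 1 to 2 corresponds to λ_L rising from 0.5 to roughly 0.71.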

4.3 Real-world implications of using copulas

For portfolio managers, understanding tail dependencies means better risk management. If two assets tend to suffer extreme losses together, diversifying across them may offer limited risk reduction. Copulas, thus, can guide better asset allocation, hedging strategies, and stress-testing exercises.

5. Limitations and Criticisms of Using Copulas

While copulas offer robust tools for financial modelling, they aren’t without challenges:

Misspecification Risk: The choice of copula matters. Using an incorrect copula can lead to misleading results.

Static Nature: While rolling windows capture evolving dependencies, copulas themselves don’t inherently model changing relationships over time.

Data Sensitivity: Small changes in input data might lead to significant shifts in the copula parameter estimates.

Given these pitfalls, regular validation is essential. Empirical checks, backtesting, and other validation techniques should be an integral part of any modelling exercise to ensure the chosen copula accurately captures the dependencies.
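One simple empirical check is to compare candidate copulas by their log-likelihood on the uniform data. The sketch below is an illustration under several assumptions: it generates synthetic data from a Clayton copula (θ = 2), sets each candidate's parameter from Kendall's tau rather than by maximum likelihood, and uses hand-written density functions instead of a library API.

```python
import numpy as np
from scipy.stats import norm, kendalltau

def clayton_logpdf(u, v, theta):
    """Log density of the bivariate Clayton copula."""
    s = u ** (-theta) + v ** (-theta) - 1.0
    return (np.log1p(theta) - (theta + 1.0) * (np.log(u) + np.log(v))
            - (2.0 * theta + 1.0) / theta * np.log(s))

def gaussian_logpdf(u, v, rho):
    """Log density of the bivariate Gaussian copula."""
    x, y = norm.ppf(u), norm.ppf(v)
    r2 = rho ** 2
    return (-0.5 * np.log(1.0 - r2)
            - (r2 * (x ** 2 + y ** 2) - 2.0 * rho * x * y) / (2.0 * (1.0 - r2)))

# Synthetic data with known lower-tail dependence (Clayton, theta = 2).
rng = np.random.default_rng(3)
true_theta, n = 2.0, 5000
u = rng.uniform(size=n)
w = rng.uniform(size=n)
v = (u ** (-true_theta) * (w ** (-true_theta / (1.0 + true_theta)) - 1.0) + 1.0) ** (-1.0 / true_theta)

# Moment-style estimates from Kendall's tau (not maximum likelihood).
tau = kendalltau(u, v)[0]
theta_hat = 2.0 * tau / (1.0 - tau)
rho_hat = np.sin(np.pi * tau / 2.0)

ll_clayton = clayton_logpdf(u, v, theta_hat).sum()
ll_gaussian = gaussian_logpdf(u, v, rho_hat).sum()
print(f"Clayton log-likelihood : {ll_clayton:.1f}")
print(f"Gaussian log-likelihood: {ll_gaussian:.1f}")
```

On data that really has lower-tail dependence, the Clayton fit should score the higher log-likelihood; on real returns the ranking is an empirical question, which is why this kind of comparison belongs in routine validation.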

6. Conclusion

Copulas bring a nuanced understanding of asset relationships, especially during extreme market scenarios. Recognizing the tail risks, portfolio managers can make more informed decisions, optimizing not just for returns but also for robustness during market downturns. As with any tool, though, the user must be wary of its limitations. Regular validation, awareness of potential pitfalls, and a constant pursuit of knowledge remain key.
