
I really liked a comparison generated by ChatGPT: Financial markets are rarely calm seas — they surge, crash, and ripple with waves of uncertainty. While this may be overly poetic, it conveys an important message. One thing that will always be present in the markets is volatility.

And although asset prices themselves may seem unpredictable, something that might be easier to forecast is volatility, or more precisely, volatility clusters. In simpler terms, these clusters refer to periods of high (or low) volatility that are often followed by more of the same. Capturing this behavior is crucial for traders, risk managers, and policymakers.

In this article, we will explore one of the approaches to forecasting time-varying volatility: the popular ARCH model.


Volatility 101

Before we talk about forecasting volatility, it makes sense to first understand what volatility actually is. Volatility refers to the degree of variation in asset prices over time and is usually measured as the standard deviation or variance of returns.

Essentially, it tells us how much risk or uncertainty is associated with the future price of an asset:

High volatility implies that returns fluctuate significantly, indicating a riskier asset.

Low volatility implies that returns are more stable, suggesting a less risky asset.

That’s almost it for the basic introduction. One last thing to keep in mind is that volatility is not static — it changes over time in patterns that we can try to model and forecast.
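To make the definition concrete, here is a minimal sketch that measures volatility as the standard deviation of returns. It uses simulated returns rather than real prices, and the function names, the 252-trading-day annualization factor, and the 21-day window are all assumptions chosen for illustration.

```python
import numpy as np

TRADING_DAYS = 252  # assumed number of trading days per year


def annualized_volatility(returns):
    """Sample standard deviation of daily returns, scaled to one year."""
    return np.std(returns, ddof=1) * np.sqrt(TRADING_DAYS)


def rolling_volatility(returns, window=21):
    """Daily volatility estimated over a sliding window of observations."""
    returns = np.asarray(returns, dtype=float)
    windows = np.lib.stride_tricks.sliding_window_view(returns, window)
    return windows.std(axis=1, ddof=1)


# simulated daily returns in percent: a calm regime followed by a turbulent one
rng = np.random.default_rng(42)
calm = rng.normal(0.0, 0.5, 250)
turbulent = rng.normal(0.0, 2.0, 250)
returns = np.concatenate([calm, turbulent])

calm_vol = annualized_volatility(calm)
turbulent_vol = annualized_volatility(turbulent)
rolling = rolling_volatility(returns, window=21)

print(f"calm: {calm_vol:.1f}%  turbulent: {turbulent_vol:.1f}%")
print(f"first window: {rolling[0]:.2f}%  last window: {rolling[-1]:.2f}%")
```

The rolling estimate moves from the calm level to the turbulent level as the window slides forward, which is the kind of time variation the rest of the article tries to model.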


Why Forecast Volatility?

Now that we know what volatility is, it should be quite clear why we’d want to forecast it. Some of the key use cases for volatility forecasting include:

Risk management — Financial institutions need to quantify and control risk. For example, a bank holding options should know by how much their value might change.

Asset allocation and portfolio management — As investors aim to balance returns and risk, they can use forecasts to manage their portfolios. For instance, if volatility is rising and they’re risk-averse, they might shift to safer assets.

Derivative valuation — The prices of derivatives, such as options, are highly sensitive to expected volatility.

The ARCH Model

The Autoregressive Conditional Heteroskedasticity (ARCH) model was introduced by Robert Engle in 1982 and was designed to forecast time-varying volatility. It is based on the concept of volatility clustering mentioned earlier — that is, periods of high volatility are typically followed by more high volatility, and low by low.

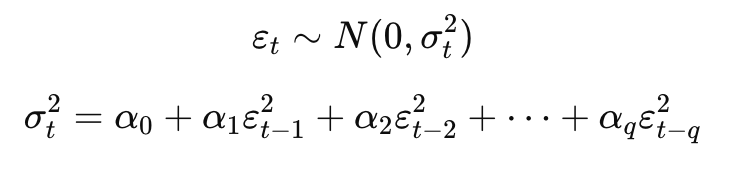

Many time series models (such as ARMA) assume that the variance of the error term is constant over time. However, this assumption is often violated in financial time series. To capture this behavior, the ARCH(q) model expresses the variance of the error term as a function of past squared errors. To be a bit more precise, it assumes that the variance of the errors follows an autoregressive model.
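Written out in LaTeX, the standard ARCH(q) specification that the definitions below refer to looks as follows. The symbol z_t (a zero-mean, unit-variance innovation) is an assumption added here, since the text does not name it.

```latex
\begin{aligned}
\varepsilon_t &= \sigma_t z_t, \qquad z_t \sim \text{i.i.d.}(0, 1) \\
\sigma_t^2 &= \alpha_0 + \sum_{i=1}^{q} \alpha_i \, \varepsilon_{t-i}^2
\end{aligned}
```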

where:

ε_t is the error term at time t,

σ_t^2 is the conditional variance at time t,

α_0 > 0, and α_i ≥ 0 for i > 0 to ensure positive variance.

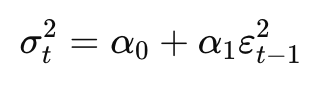

Let’s try to get an intuitive understanding of the formula. In the simplest case, we are dealing with the ARCH(1) model with the following formula:

σ_t^2 = α_0 + α_1 ε_{t-1}^2

The formula essentially states that today’s conditional variance equals a constant plus a multiple of yesterday’s squared return shock. So if there’s a large unexpected movement today, tomorrow’s forecasted volatility will be higher.

The biggest strength of the ARCH model is that it implies a return distribution with excess kurtosis (fat tails as compared to the Normal distribution), which is in line with the empirical observations about stock returns.
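Both the recursion and the fat tails can be checked on simulated data. The sketch below simulates an ARCH(1) process with NumPy; the function name, the parameter values (α_0 = 1.0, α_1 = 0.3), and the Gaussian innovations are assumptions for illustration, not estimates from this article.

```python
import numpy as np


def simulate_arch1(omega, alpha, n_obs, seed=0):
    """Simulate ARCH(1) shocks and their conditional variances."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n_obs)      # standard normal innovations
    eps = np.empty(n_obs)
    sigma2 = np.empty(n_obs)
    sigma2[0] = omega / (1.0 - alpha)   # start at the unconditional variance
    eps[0] = np.sqrt(sigma2[0]) * z[0]
    for t in range(1, n_obs):
        # today's variance = constant + alpha * yesterday's squared shock
        sigma2[t] = omega + alpha * eps[t - 1] ** 2
        eps[t] = np.sqrt(sigma2[t]) * z[t]
    return eps, sigma2


eps, sigma2 = simulate_arch1(omega=1.0, alpha=0.3, n_obs=200_000)

sample_variance = eps.var()
sample_kurtosis = np.mean(eps**4) / np.mean(eps**2) ** 2
squared = eps**2
acf1 = np.corrcoef(squared[:-1], squared[1:])[0, 1]  # lag-1 autocorrelation

print(f"variance: {sample_variance:.3f}")
print(f"kurtosis: {sample_kurtosis:.3f}")
print(f"lag-1 autocorrelation of squared shocks: {acf1:.3f}")
```

For these parameters, theory gives an unconditional variance of α_0 / (1 − α_1) ≈ 1.43 and a kurtosis of 3(1 − α_1²) / (1 − 3α_1²) ≈ 3.74, above the value of 3 for a Normal distribution. The positive autocorrelation of squared shocks is the volatility clustering described earlier.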

Lastly, it is also important to be aware of the limitations of the ARCH model:

Financial time series often exhibit long memory in volatility, which ARCH cannot effectively model without including many lags.

ARCH ignores the leverage effect, assuming that positive and negative shocks of the same magnitude have identical impacts on volatility.

ARCH describes how the conditional variance evolves but does not explain what drives the changes in volatility. It also tends to over-forecast volatility, because it is slow to respond to large, isolated shocks in the returns series.
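The symmetry behind the leverage-effect limitation is easy to see numerically. In this small sketch, the function name is hypothetical and the parameter values are borrowed from the ARCH(1) fit shown later in the article; a +3% and a -3% shock produce exactly the same next-day variance because the shock only enters squared.

```python
def arch1_next_variance(omega: float, alpha: float, shock: float) -> float:
    """Next-period conditional variance of an ARCH(1) model."""
    return omega + alpha * shock**2


# illustrative values, expressed in squared percent returns
OMEGA, ALPHA = 2.5171, 0.2586

after_gain = arch1_next_variance(OMEGA, ALPHA, shock=3.0)
after_loss = arch1_next_variance(OMEGA, ALPHA, shock=-3.0)

print(f"variance after +3%: {after_gain:.4f}")
print(f"variance after -3%: {after_loss:.4f}")
```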


💻 Hands-On Example in Python

It is time to get our hands a bit dirty and create volatility forecasts using the arch library.

As always, we start by importing the required libraries: requests to download data, pandas for some data wrangling, matplotlib for plotting, and arch for volatility forecasting.

The api_keys file contains our personal API key to Financial Modeling Prep’s API, from where we will download the historical stock prices.

import requests

import pandas as pd

from arch import arch_model

from datetime import datetime

# plotting

import matplotlib.pyplot as plt

# settings

plt.style.use("seaborn-v0_8-colorblind")

plt.rcParams["figure.figsize"] = (16, 8)

# api key

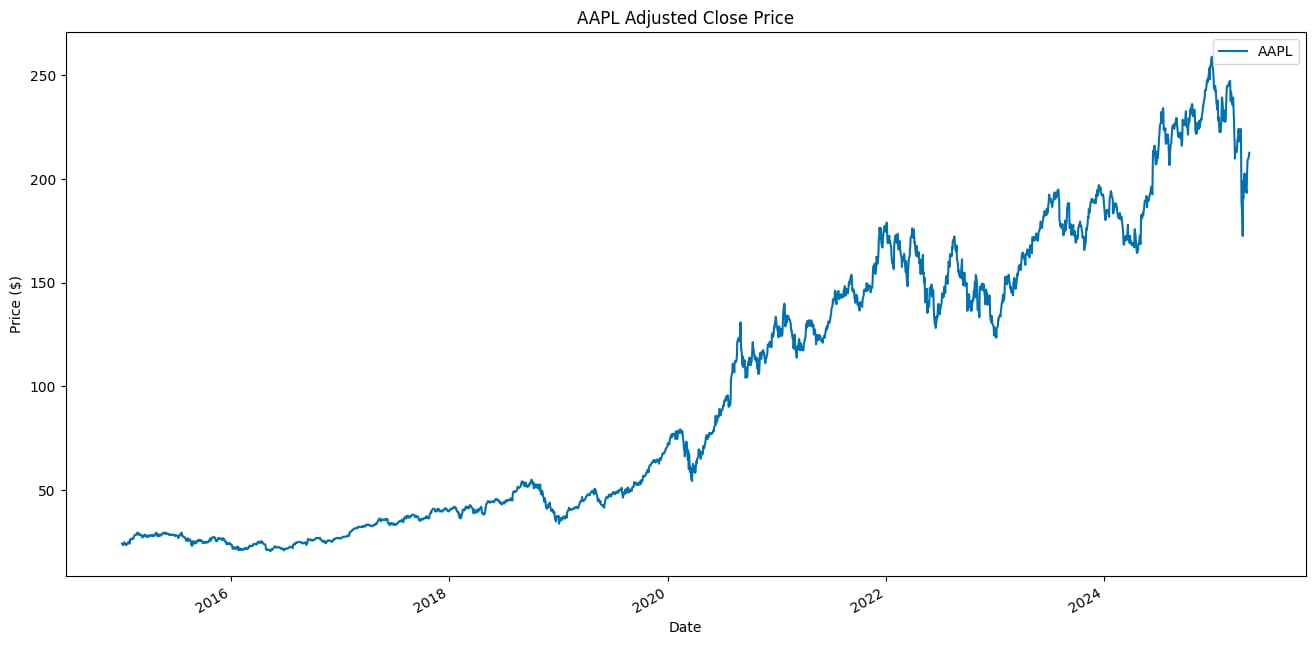

from api_keys import FMP_API_KEYThen, we download the data. For this example, we use Apple’s stock prices from 2015 to April 2025. ARCH models estimate time-varying variance, which is generally more data-hungry than conditional mean models. With too little data, we risk unstable variance estimates and overfitting. Having enough data also helps capture different volatility regimes (for example, crises and quieter periods).

TICKER = "AAPL"
START_DATE = "2015-01-01"
END_DATE = "2025-04-30"
SPLIT_DATE = datetime(2025, 1, 1)


def get_adj_close_price(symbol: str, start_date: str, end_date: str) -> pd.DataFrame:
    """
    Fetches adjusted close prices for a given ticker and date range from FMP.
    """
    url = f"https://financialmodelingprep.com/api/v3/historical-price-full/{symbol}"
    params = {
        "from": start_date,
        "to": end_date,
        "apikey": FMP_API_KEY,
    }
    response = requests.get(url, params=params, timeout=30)
    response.raise_for_status()  # fail early on HTTP errors
    r_json = response.json()

    if "historical" not in r_json:
        raise ValueError(f"No historical data found for {symbol}")

    # index by date and sort so the series runs from oldest to newest
    df = pd.DataFrame(r_json["historical"]).set_index("date").sort_index()
    df.index = pd.to_datetime(df.index)
    return df[["adjClose"]].rename(columns={"adjClose": symbol})


price_df = get_adj_close_price(TICKER, START_DATE, END_DATE)
price_df.plot(title=f"{TICKER} Adjusted Close Price", ylabel="Price ($)", xlabel="Date")

Below we can see the downloaded stock prices of Apple.

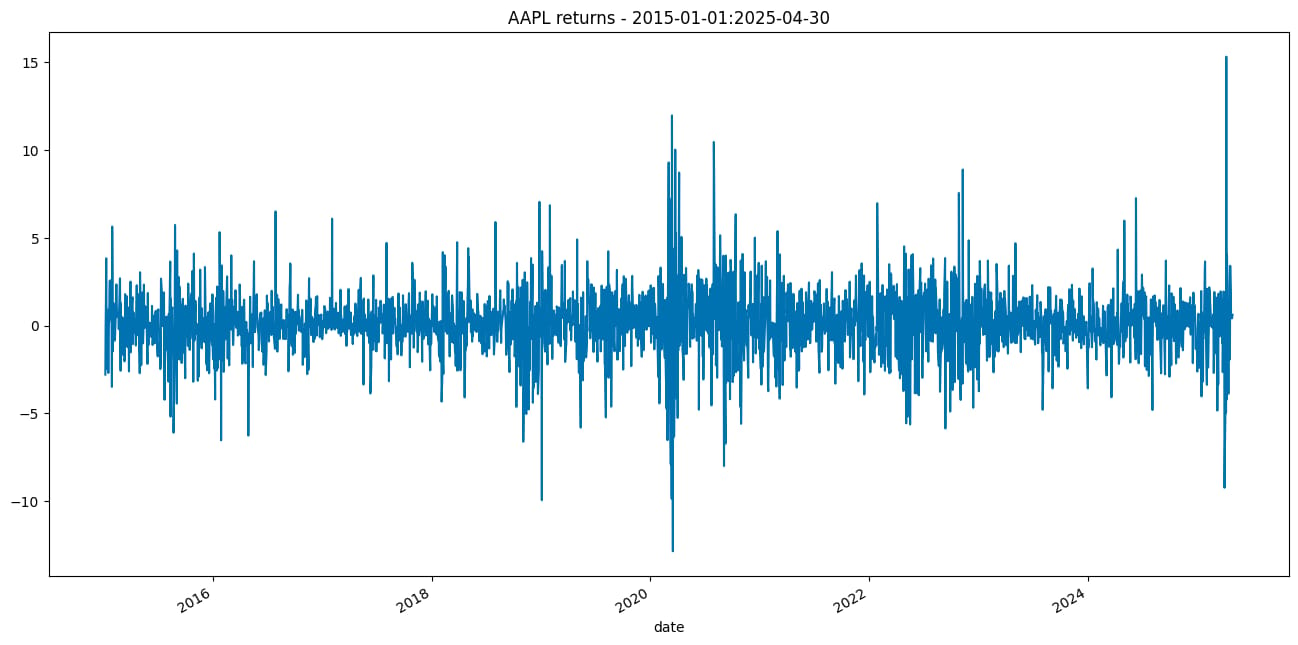

Then, we need to convert the prices to returns.

# percentage returns; the first row has no previous price and is dropped
return_df = 100 * price_df[TICKER].pct_change().dropna()
return_df.name = "asset_returns"
return_df.plot(title=f"{TICKER} returns - {START_DATE}:{END_DATE}");

Our dataset contains 2596 observations.
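For readers who want to see what the pct_change step computes, here is a minimal NumPy sketch on three made-up prices. The helper name is hypothetical; it mirrors the percentage-return calculation above rather than reproducing the pandas implementation.

```python
import numpy as np


def percent_returns(prices):
    """Simple returns in percent: 100 * (P_t / P_{t-1} - 1)."""
    prices = np.asarray(prices, dtype=float)
    return 100.0 * (prices[1:] / prices[:-1] - 1.0)


prices = [100.0, 102.0, 99.96]  # made-up prices for illustration
returns = percent_returns(prices)
print(returns)
```

Note that n prices yield n − 1 returns, since the first price has nothing to be compared against. Expressing returns in percent also keeps the values in a range that numerical optimizers tend to handle better.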

Thanks to the arch library, estimating ARCH models is as simple as instantiating the model and using the .fit() method. We will train two models, using 1 and 5 autoregressive components.

# ARCH(1) and ARCH(5) with a zero-mean specification
model_1 = arch_model(return_df, mean="Zero", vol="ARCH", p=1, q=0)
model_2 = arch_model(return_df, mean="Zero", vol="ARCH", p=5, q=0)

fitted_model_1 = model_1.fit(disp="off")
fitted_model_2 = model_2.fit(disp="off")

For the mean model, we selected the zero-mean approach, which is suitable for many liquid financial assets. Another possible choice here could be a constant mean.

We can inspect the fitted models by using the summary method.

print(fitted_model_1.summary())

                        Zero Mean - ARCH Model Results
==============================================================================
Dep. Variable:          asset_returns   R-squared:                       0.000
Mean Model:                 Zero Mean   Adj. R-squared:                  0.000
Vol Model:                       ARCH   Log-Likelihood:               -5158.24
Distribution:                  Normal   AIC:                           10320.5
Method:            Maximum Likelihood   BIC:                           10332.2
                                        No. Observations:                 2596
Date:                Mon, May 05 2025   Df Residuals:                     2596
Time:                        13:35:15   Df Model:                            0
                            Volatility Model
========================================================================
                 coef    std err          t      P>|t|  95.0% Conf. Int.
------------------------------------------------------------------------
omega          2.5171      0.166     15.203  3.374e-52 [  2.193,  2.842]
alpha[1]       0.2586  5.807e-02      4.453  8.455e-06 [  0.145,  0.372]
========================================================================

Covariance estimator: robust

We will do this again for the ARCH(5) model to compare the parameter estimates.

Zero Mean - ARCH Model Results

==============================================================================

Dep. Variable: asset_returns R-squared: 0.000

Mean Model: Zero Mean Adj. R-squared: 0.000

Vol Model: ARCH Log-Likelihood: -5030.98

Distribution: Normal AIC: 10074.0

Method: Maximum Likelihood BIC: 10109.1

No. Observations: 2596

Date: Mon, May 05 2025 Df Residuals: 2596

Time: 13:35:15 Df Model: 0

Volatility Model

==========================================================================

coef std err t P>|t| 95.0% Conf. Int.

--------------------------------------------------------------------------

omega 1.4362 0.143 10.033 1.089e-23 [ 1.156, 1.717]

alpha[1] 0.1496 3.811e-02 3.925 8.660e-05 [7.490e-02, 0.224]

alpha[2] 0.0902 3.561e-02 2.532 1.133e-02 [2.038e-02, 0.160]

alpha[3] 0.1232 3.976e-02 3.100 1.938e-03 [4.531e-02, 0.201]

alpha[4] 0.1064 4.501e-02 2.364 1.809e-02 [1.818e-02, 0.195]

alpha[5] 0.1105 3.792e-02 2.915 3.556e-03 [3.622e-02, 0.185]

==========================================================================

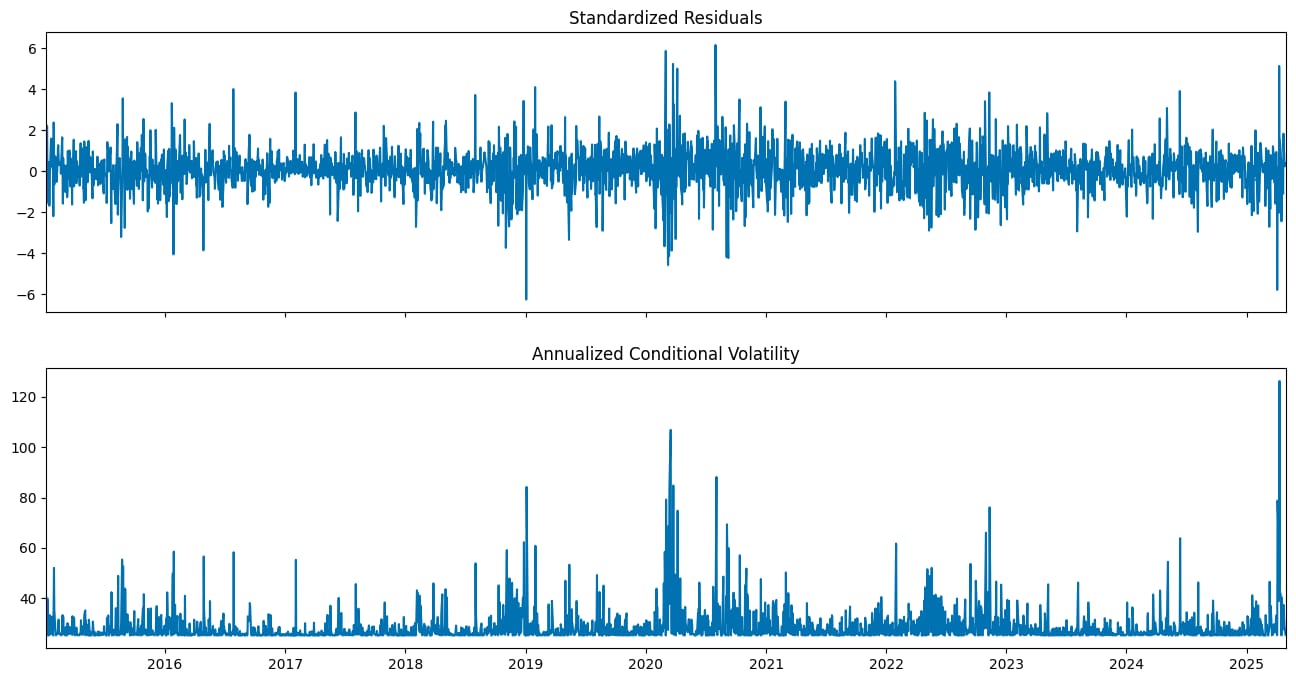

Covariance estimator: robustNow we can inspect the standardized residuals and the conditional volatility series by plotting them. The standardized residuals were computed by dividing the residuals by the conditional volatility.

We use the annualize="D" argument of the plot method in order to annualize the conditional volatility series from daily data.

fitted_model_1.plot(annualize="D");

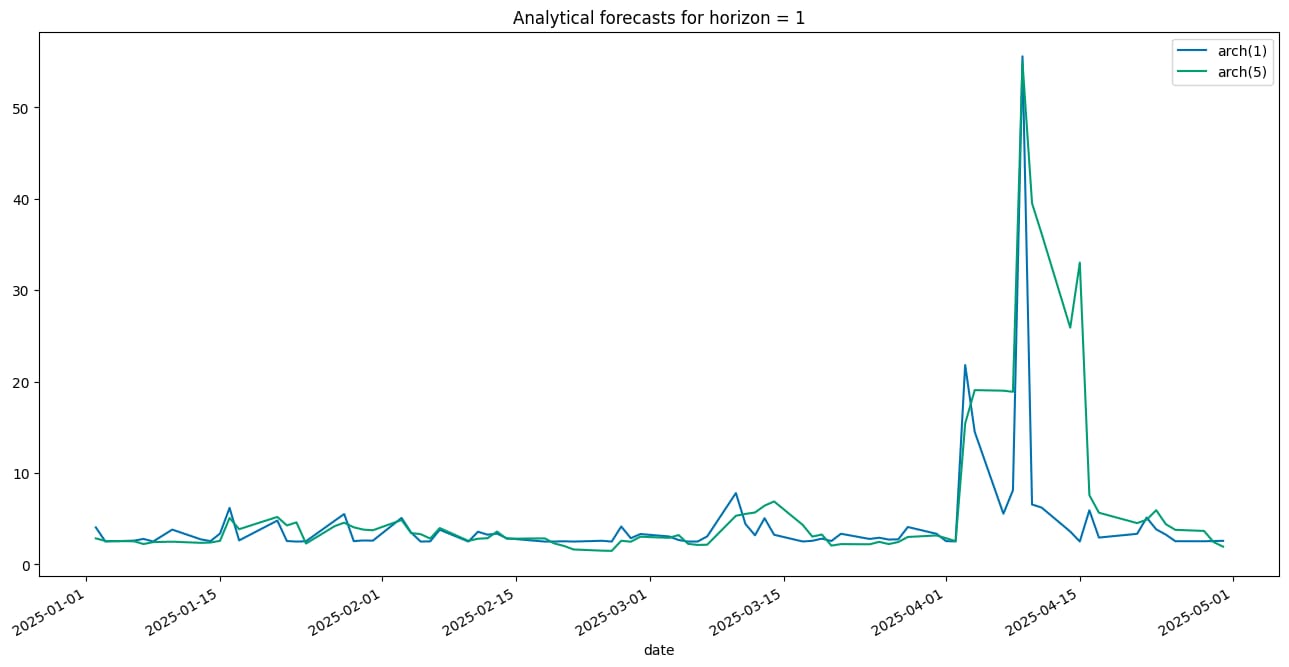
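Before moving on to forecasts, the two summaries above can be compared with a few lines of arithmetic. This sketch copies the printed coefficients by hand and computes the long-run (unconditional) variance each model implies, omega / (1 − sum of alphas), together with the corresponding annualized volatility. The helper name and the 252-trading-day factor are assumptions.

```python
import math

TRADING_DAYS = 252  # assumed annualization factor

# coefficients and AIC values copied from the two printed summaries
arch1 = {"omega": 2.5171, "alphas": [0.2586], "aic": 10320.5}
arch5 = {
    "omega": 1.4362,
    "alphas": [0.1496, 0.0902, 0.1232, 0.1064, 0.1105],
    "aic": 10074.0,
}


def long_run_annualized_vol(params):
    """Annualized volatility (in %) implied by the unconditional variance."""
    persistence = sum(params["alphas"])
    if persistence >= 1:
        raise ValueError("unconditional variance is not finite")
    long_run_variance = params["omega"] / (1.0 - persistence)
    return math.sqrt(long_run_variance * TRADING_DAYS)


vol_1 = long_run_annualized_vol(arch1)
vol_5 = long_run_annualized_vol(arch5)

print(f"ARCH(1): persistence={sum(arch1['alphas']):.4f}, long-run vol={vol_1:.2f}%")
print(f"ARCH(5): persistence={sum(arch5['alphas']):.4f}, long-run vol={vol_5:.2f}%")
print(f"AIC improvement from ARCH(1) to ARCH(5): {arch1['aic'] - arch5['aic']:.1f}")
```

Both models imply a similar long-run volatility of roughly 29% per year, but ARCH(5) spreads the dependence over more lags and has the lower AIC and BIC, so the extra lags improve the fit even after the penalty for additional parameters.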

Having estimated the models, it is now time to generate the volatility forecasts. Using the ARCH model family, there are three main approaches to doing so:

Analytical: One-step forecasts are always available due to the model structure. Multi-step forecasts require forward recursion and are only feasible for models that are linear in squared residuals. That includes ARCH itself and extensions such as GARCH and HARCH, but not models such as EGARCH.

Simulation: This approach simulates future volatility paths using random draws from a specified residual distribution. Averaging these paths yields the forecast. It works for any forecast horizon and converges to the analytical forecast (where one exists) as the number of simulations increases.

Bootstrap (aka Filtered Historical Simulation): Similar to simulation, but instead of assuming a distribution it resamples the standardized residuals obtained from the historical data and the estimated model parameters. This method needs a minimum amount of in-sample data before it can produce forecasts.

Due to the specification of ARCH class models, the first out-of-sample forecast will always be the same, regardless of which approach we use.

As you can see in the snippet below, we use the analytic method for generating the forecasts, but you could also pass in "simulation" or "bootstrap".

# refit both models using only the data before the split date
fitted_model_1 = model_1.fit(last_obs=SPLIT_DATE, disp="off")
fitted_model_2 = model_2.fit(last_obs=SPLIT_DATE, disp="off")

# one-step-ahead variance forecasts over the out-of-sample period
forecasts_analytical_1 = fitted_model_1.forecast(
    horizon=1,
    start=SPLIT_DATE,
    method="analytic",
    reindex=False,
)

forecasts_analytical_2 = fitted_model_2.forecast(
    horizon=1,
    start=SPLIT_DATE,
    method="analytic",
    reindex=False,
)

# put both forecast series side by side and plot them
a = forecasts_analytical_1.variance.rename(columns={"h.1": "arch(1)"})
b = forecasts_analytical_2.variance.rename(columns={"h.1": "arch(5)"})
a.join(b).plot(title="Analytical forecasts for horizon = 1");

As you can see in the generated plot, the volatility forecast varies quite a lot depending on the number of autoregressive terms used in estimating the ARCH model.
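To see how the analytical and simulation approaches described above relate, here is a minimal NumPy sketch for an ARCH(1) model. It does not use the arch library; both functions are hypothetical helpers written for illustration, the coefficients are copied from the ARCH(1) summary, and the last observed shock of 4% is a made-up value.

```python
import numpy as np


def arch1_analytic_forecast(omega, alpha, last_shock, horizon):
    """Multi-step variance forecasts for ARCH(1) via forward recursion."""
    forecasts = np.empty(horizon)
    forecasts[0] = omega + alpha * last_shock**2  # h = 1 uses the observed shock
    for h in range(1, horizon):
        # the expected squared shock equals the previous variance forecast
        forecasts[h] = omega + alpha * forecasts[h - 1]
    return forecasts


def arch1_simulation_forecast(omega, alpha, last_shock, horizon,
                              n_paths=200_000, seed=0):
    """Average conditional variance across simulated paths (normal draws)."""
    rng = np.random.default_rng(seed)
    shocks = np.full(n_paths, float(last_shock))
    forecasts = np.empty(horizon)
    for h in range(horizon):
        variance = omega + alpha * shocks**2
        forecasts[h] = variance.mean()
        shocks = np.sqrt(variance) * rng.standard_normal(n_paths)
    return forecasts


OMEGA, ALPHA = 2.5171, 0.2586  # copied from the ARCH(1) summary
analytic = arch1_analytic_forecast(OMEGA, ALPHA, last_shock=4.0, horizon=5)
simulated = arch1_simulation_forecast(OMEGA, ALPHA, last_shock=4.0, horizon=5)

for h, (a_h, s_h) in enumerate(zip(analytic, simulated), start=1):
    print(f"h={h}: analytic={a_h:.4f}  simulated={s_h:.4f}")
```

The first-step forecasts coincide because they depend only on the observed shock, while the later steps agree up to simulation noise. As the horizon grows, both decay toward the long-run variance omega / (1 − alpha).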

In the next article on volatility forecasting, we will explore the Generalized Autoregressive Conditional Heteroskedasticity (GARCH) model, an extension of the ARCH model.

Wrapping up

In this article, we have explored volatility forecasting with ARCH models. The main takeaways are:

Volatility forecasting aims to predict the variability of asset returns over time, which is crucial for risk management, derivative pricing, and portfolio allocation.

The ARCH model captures time-varying volatility by modeling the current period’s variance as a function of past squared returns.

It accounts for volatility clustering — periods of high or low volatility persisting over time.
