

Infographic Overview of Nonlinear Problems in Machine Learning Regression

Scikit-learn support vector regression toy example showing RBF, linear, and polynomial models (replicated by the author).

Third-order polynomial regression for TSLA with a time dummy, generated by the author using the Scikit-learn PolynomialFeatures class.

Synthetic linear and polynomial regression test examples (author-generated).

Non-Linear Regression Analysis: China’s GDP vs normalized Year/max(Year) Example [1, 2].

This article is structured as follows:

First, we retrieve the historical PLTR stock data via the EODHD APIs.

Next, we compare polynomial regression fits of orders N=1–4 and implement a trading strategy based on the cubic (N=3) fit.

Finally, we assess the strategy's risk-return performance against Buy & Hold, used as a market proxy benchmark, with both bias-free risk-adjusted backtesting and out-of-sample (OOS) testing.

Now, let’s see how this nonlinear method performs with financial time series data! 🚀📊

Contents

· Download Input Stock Data

· Polynomial Regression Analysis

· Bias-Free Cubic Regression Trading Strategy

· Bias-Free Backtesting vs Market

· Out-of-Sample (OOS) Testing vs Market

· Conclusions

· References 📚

· Explore More 🔍

· Contacts 📬

· Disclaimer 📜

Download Input Stock Data

Retrieving daily PLTR stock data through the EODHD APIs and organizing it in two different formats for the subsequent regression analysis

#EODHD APIs

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from eodhd import APIClient

import mplfinance as mpf

# --- PARAMETERS ---

symbol = "PLTR"

start_date = "2021-01-01"

end_date = "2026-01-31"

# --- DOWNLOAD PRICE DATA FROM EODHD ---

api = APIClient("YOUR_API_KEY")  # replace with your own EODHD API key

# Daily bars (period='d') in ascending date order (order='a')

resp = api.get_eod_historical_stock_market_data(symbol = symbol, period='d', from_date = start_date, to_date = end_date, order='a')

data = pd.DataFrame(resp)

data['date'] = pd.to_datetime(data['date'])

data.set_index('date', inplace=True)

data = data[['open', 'high', 'low', 'close','volume']].rename(columns=str.title) # Align with OHLC naming

data.sort_index(inplace=True)

#Format 1

data.tail()

Open High Low Close Volume

date

2026-01-26 168.21000000 170.58970000 167.32500000 167.47000000 22777160

2026-01-27 167.48000000 169.44000000 164.69000000 165.70000000 26542311

2026-01-28 164.40000000 165.04500000 157.24000000 157.35000000 44822848

2026-01-29 157.63000000 157.63000000 147.12000000 151.86000000 59846809

2026-01-30 150.05000000 151.00000000 145.13900000 146.59000000 47271042

datal = pd.DataFrame(resp)

datal['date'] = pd.to_datetime(datal['date'])

#Format 2

datal.tail()

date open high low close adjusted_close volume

1270 2026-01-26 168.21000000 170.58970000 167.32500000 167.47000000 167.47000000 22777160

1271 2026-01-27 167.48000000 169.44000000 164.69000000 165.70000000 165.70000000 26542311

1272 2026-01-28 164.40000000 165.04500000 157.24000000 157.35000000 157.35000000 44822848

1273 2026-01-29 157.63000000 157.63000000 147.12000000 151.86000000 151.86000000 59846809

1274 2026-01-30 150.05000000 151.00000000 145.13900000 146.59000000 146.59000000 47271042

df = datal.copy()  # Format 2 (with a 'date' column) is used for the regression analysis

Examining the general data structure

data.info()

<class 'pandas.core.frame.DataFrame'>

DatetimeIndex: 1275 entries, 2021-01-04 to 2026-01-30

Data columns (total 5 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 Open 1275 non-null float64

1 High 1275 non-null float64

2 Low 1275 non-null float64

3 Close 1275 non-null float64

4 Volume 1275 non-null int64

dtypes: float64(4), int64(1)

memory usage: 59.8 KB

Plotting the PLTR candlesticks from 2021-01-04 to 2026-01-30

# Plot candlesticks

mpf.plot(

data,

type='candle',

volume=True,

title='PLTR Stock Data', figratio=(16, 9),figscale=1.5,

style='yahoo'

)

PLTR candlesticks vs volume from 2021-01-04 to 2026-01-30.

This plot captures PLTR's rollercoaster-like price action, most visibly in 2025. While PLTR trended strongly higher for much of that year, supported by solid revenue growth, the rally was punctuated by bouts of volatility and profit-taking, as is typical of high-growth tech stocks. Overall, the long-term uptrend points to sustained investor confidence in PLTR's growth trajectory.


Polynomial Regression Analysis

Let's explore four polynomial regression scenarios, one for each order N from 1 to 4.
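For reference, an N-order polynomial regression on a time index t models close_t ≈ β₀ + β₁t + … + β_N t^N. It is still ordinary least squares, just on the expanded feature matrix [1, t, t², …, t^N] that PolynomialFeatures builds. This NumPy-only sketch, on a made-up five-point index rather than PLTR data, uses np.vander to build the same columns for N = 3 and shows that least squares recovers an exact cubic.

```python
import numpy as np

# Hypothetical toy time index t = 0..4 (not PLTR data)
t = np.arange(5, dtype=float)

# Columns [1, t, t^2, t^3]: the same matrix PolynomialFeatures(degree=3)
# produces for a single input feature
T = np.vander(t, N=4, increasing=True)
print(T[2])  # [1. 2. 4. 8.]

# A target that is an exact cubic: y = 1 + 2t - 0.5t^2 + 0.1t^3
true_beta = np.array([1.0, 2.0, -0.5, 0.1])
y = T @ true_beta

# Ordinary least squares on the expanded features recovers the coefficients
beta_hat, *_ = np.linalg.lstsq(T, y, rcond=None)
print(np.round(beta_hat, 6))
```

Because the model stays linear in its coefficients, raising N only adds columns; nothing about the fitting procedure changes.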

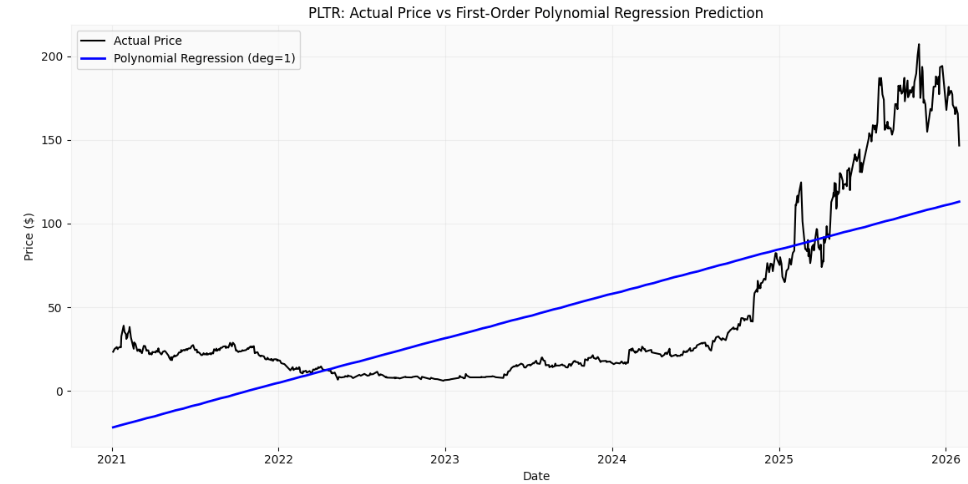

Case 1: N=1 (First-Order or Linear Regression)

Step 0 sets the polynomial order

N=1

Step 1 generates a time-based feature, converts it into polynomial features of degree N, fits an N-order regression model to predict the stock's closing prices, and saves the predicted values in the DataFrame

import numpy as np

from sklearn.preprocessing import PolynomialFeatures

from sklearn.linear_model import LinearRegression

# Time index

df["t"] = np.arange(len(df))

X = df[["t"]]

y = df["close"]

poly = PolynomialFeatures(degree=N)

X_poly = poly.fit_transform(X)

model = LinearRegression()

model.fit(X_poly, y)

df["poly_fit"] = model.predict(X_poly)

Step 2 conducts quality control (QC) analysis on the regression results

import matplotlib.pyplot as plt

plt.figure(figsize=(12, 6))

# Actual prices

plt.plot(df["date"], df["close"],

label="Actual Price",

color="black",

linewidth=1.5)

# Polynomial predictions

plt.plot(df["date"], df["poly_fit"],

label=f"Polynomial Regression (deg={N})",

color="blue",

linewidth=2)

plt.title("PLTR: Actual Price vs Polynomial Regression Prediction")

plt.xlabel("Date")

plt.ylabel("Price ($)")

plt.legend()

plt.grid(alpha=0.3)

plt.tight_layout()

plt.show()

PLTR: Actual Price vs First-Order Polynomial Regression Prediction

from sklearn.metrics import r2_score

y_test=df["close"]

y_preds=df["poly_fit"]

r2 = r2_score(y_test, y_preds)

r2

0.5301551809775094

from sklearn.metrics import mean_absolute_error

mae = mean_absolute_error(y_test, y_preds)

mae

31.671081407432062

from sklearn.metrics import mean_squared_error

mse = mean_squared_error(y_test,

y_preds)

rmse=np.sqrt(mse)

rmse

36.67741116165101

from sklearn.metrics import mean_absolute_percentage_error

mape=mean_absolute_percentage_error(y_test, y_preds)

mape

1.3836612746258175

Let's take a closer look at the metrics above.

R² ≈ 0.53. About half of PLTR's price variation over 2021–2026 is explained by the model. The line follows the general upward trend, but it clearly misses many of the day-to-day and month-to-month swings. For a single straight line over five years of stock data, this is pretty typical.

MAE ~ 31.7. On average, the model's predictions are off by about $32.

That's a big miss in absolute dollars, which tells you the model isn't meant for precise price prediction: it's more of a rough trend indicator than a forecasting tool.

RMSE ~ 36.7. RMSE is always at least as large as MAE because squaring weights big errors more heavily, so the gap between the two reflects the large misses during volatility spikes. A straight line handles sudden regime changes poorly, and PLTR had several between 2021 and 2026.

MAPE ~ 1.38 (138%) looks terrible, but it's not very informative here. MAPE blows up when prices are low or when errors are large relative to price, both of which happen often in volatile stocks, so in long-horizon stock models it tends to overstate how poorly the model tracks the trend.

Bottom Line: The model does a decent job picking up PLTR’s overall long-term direction, but it’s not very good at predicting actual prices. That’s pretty normal for a simple linear model when you apply it to a volatile stock over a long time span like five years or more.
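The four metrics are easy to reproduce by hand, which makes their behavior clearer. This sketch uses a hypothetical helper, regression_metrics, on made-up numbers (not PLTR data): a single large miss at a low price inflates RMSE relative to MAE and dominates MAPE. As with scikit-learn's mean_absolute_percentage_error, MAPE comes out as a fraction, so 1.38 means 138%.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return R^2, MAE, RMSE and MAPE (as a fraction) for two 1-D arrays."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_true - y_pred
    ss_res = np.sum(err ** 2)                       # residual sum of squares
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total sum of squares
    return {
        "r2": 1 - ss_res / ss_tot,
        "mae": np.mean(np.abs(err)),
        "rmse": np.sqrt(np.mean(err ** 2)),
        "mape": np.mean(np.abs(err) / np.abs(y_true)),
    }

# Hypothetical prices: one $20 miss at a $10 price, $1 misses elsewhere
y_true = np.array([10.0, 20.0, 30.0, 40.0, 50.0])
y_pred = np.array([30.0, 21.0, 29.0, 41.0, 49.0])
m = regression_metrics(y_true, y_pred)
print(m)
```

Here the single miss at $10 contributes 2.0 of the roughly 2.13 summed percentage errors, which is why MAPE is a poor guide when a series spends time at low prices.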

Case 2: N=2 (Second-Order or Quadratic Regression)

Step 0 sets the polynomial order

N=2

Repeat Steps 1–2

PLTR: Actual Price vs Second-Order Polynomial Regression Prediction

r2

0.9260435495814011

mae

12.04259941966093

rmse

14.551557578958798

mape

0.5335381048023552

Looking at the linear and quadratic regression metrics side by side:

R² goes from 0.53 with the linear model up to 0.93 with the quadratic model, meaning the quadratic model explains a lot more of the variation in PLTR's prices.

MAE drops from about $32 to $12, cutting the average error by about 62%. While it's still not accurate enough for trading, it gives a much clearer picture of the overall trend.

RMSE falls from $37 to $14.5, so the quadratic model deals with extreme swings (e.g. 2021 highs, 2022 drawdown, later recovery) much better and avoids the huge misses we saw with the linear model.

MAPE goes from 138% down to 53%, so while it’s not perfect, the quadratic model does a much better job keeping errors under control across the price range.

Bottom Line: The second-order polynomial model is much better at fitting PLTR’s long-term price path. It captures curvature and regime changes that a straight line simply can’t, while still focusing on the overall trend rather than short-term noise.
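The relative changes between the N=1 and N=2 fits can be checked directly from the metrics printed above (rounded here); pct_change is just an illustrative helper.

```python
# Metrics as printed above for N=1 and N=2 (rounded)
linear = {"r2": 0.5302, "mae": 31.671, "rmse": 36.677, "mape": 1.3837}
quadratic = {"r2": 0.9260, "mae": 12.043, "rmse": 14.552, "mape": 0.5335}

def pct_change(old, new):
    """Relative change from old to new, as a fraction."""
    return (new - old) / old

changes = {k: pct_change(linear[k], quadratic[k]) for k in linear}
for name, change in changes.items():
    print(f"{name}: {change:+.1%}")
```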

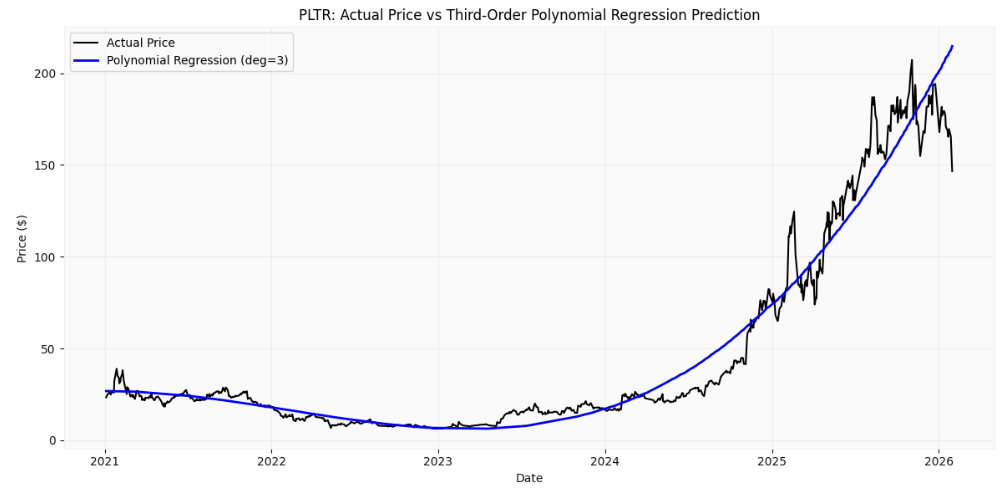

Case 3: N=3 (Third-Order or Cubic Regression)

Step 0 sets the polynomial order

N=3

Repeat Steps 1–2

PLTR: Actual Price vs Third-Order Polynomial Regression Prediction

r2

0.9614082295622388

mae

6.769918384244434

rmse

10.511599463793297

mape

0.197197611386881

Comparing PLTR's second-order vs third-order polynomial regression performance:

Switching from quadratic to cubic, the model's R² goes from 0.93 to 0.96, explaining over 96% of PLTR's price variation. It does a better job of following the smaller fluctuations around the long-term trend.

MAE drops by almost half, going from about $12 to around $6.8. Predictions are now much closer to the real prices, so the model follows the trend a lot better without big misses.

RMSE gets a lot smaller, dropping from about $14.5 to around $10.5. This shows the cubic model handles sharp spikes and drops much better than the quadratic one.

MAPE drops a lot, going from around 53% down to about 20%. The model now handles different price levels much better and fits the actual data much more closely.


Bottom Line: Stepping up from quadratic to cubic really cleans things up. The model makes fewer mistakes, tracks the big swings in PLTR's long-term trend better, and explains more of the price action, with no obvious sign of overfitting yet.

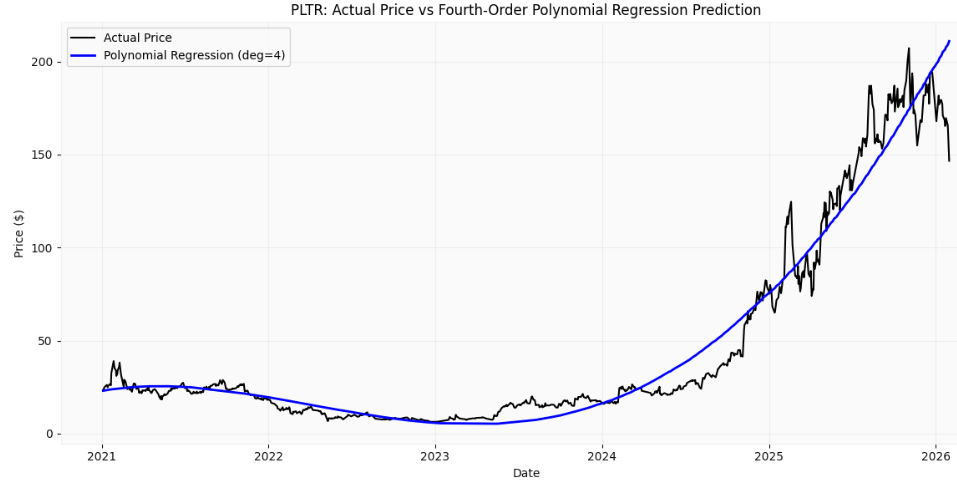

Case 4: N=4 (Fourth-Order or Quartic Regression)

Step 0 sets the polynomial order

N=4

Repeat Steps 1–2

PLTR: Actual Price vs Fourth-Order Polynomial Regression Prediction

r2

0.961970651831595

mae

7.018238808224246

rmse

10.434722248369546

mape

0.22795328820416313

The fourth-order model shows diminishing returns, a common early warning sign of overfitting. Here's why:

R² goes from 0.961 (cubic) to 0.962 (quartic). That tiny change means the extra complexity isn't really helping the model capture more of the trend.

MAE actually edges up slightly, from roughly $6.77 to $7.02, so on a per-day basis the predictions aren't any more accurate.

RMSE is basically the same, dropping slightly from $10.51 to $10.43, which isn't a meaningful difference.

MAPE rises a little from 19.7% to 22.8%, showing that the fourth-order term doesn't improve relative (percentage) accuracy across price levels.

Bottom Line: Moving from cubic to fourth-order gives almost no real benefit. R² barely improves, errors don't get smaller, and the model starts showing signs of overfitting rather than actually following the trend better. For PLTR's long-term data, cubic seems to hit the sweet spot.
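In-sample R² can only stay flat or rise as the degree grows, because each higher-degree model contains the lower one as a special case, so in-sample metrics alone cannot confirm or rule out overfitting. A more direct check is to score each degree on bars the fit has not seen. The sketch below does this with forward-chaining folds, similar in spirit to scikit-learn's TimeSeriesSplit, on a synthetic random walk (an assumption, not PLTR data); both helper names are hypothetical.

```python
import numpy as np

def in_sample_rmse(y, degree):
    """RMSE of a degree-N polynomial trend fitted to, and scored on, all of y."""
    t = np.arange(len(y), dtype=float) / len(y)   # time scaled to [0, 1) for conditioning
    resid = y - np.polyval(np.polyfit(t, y, degree), t)
    return float(np.sqrt(np.mean(resid ** 2)))

def forward_chain_rmse(y, degree, n_splits=4):
    """Average RMSE on unseen blocks: fit on all bars before each cut, score the next block."""
    n = len(y)
    t = np.arange(n, dtype=float) / n
    fold = n // (n_splits + 1)
    errors = []
    for k in range(1, n_splits + 1):
        train_end, test_end = k * fold, (k + 1) * fold
        coefs = np.polyfit(t[:train_end], y[:train_end], degree)   # training bars only
        pred = np.polyval(coefs, t[train_end:test_end])            # extrapolate into the test block
        errors.append(np.sqrt(np.mean((y[train_end:test_end] - pred) ** 2)))
    return float(np.mean(errors))

# Synthetic random walk standing in for a price series (assumption, not PLTR data)
rng = np.random.default_rng(0)
prices = 100 + np.cumsum(rng.normal(0, 1, 1000))

for d in range(1, 5):
    print(f"degree {d}: in-sample RMSE {in_sample_rmse(prices, d):.2f}, "
          f"out-of-fold RMSE {forward_chain_rmse(prices, d):.2f}")
```

The in-sample column can only improve with degree; the out-of-fold column is the one that reveals whether the extra terms generalize.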

Bias-Free Cubic Regression Trading Strategy

In this section, we turn our attention to building a long trading strategy using the cubic regression model. The idea is straightforward: we follow the trend. When PLTR’s price is above the cubic regression line and the slope is pointing up, we take it as a signal to go long. Basically, we’re riding the stock when it’s strong and staying out when it’s weak — letting the model’s curve guide our entries.

Current issue with regression fitting

The regression fitting procedure shown above cannot be directly applied to a real trading scenario due to lookahead bias. When the regression is trained on the full 2021–2026 dataset, the resulting trading signals implicitly incorporate future price information that would not have been available at the time.

Rolling/expanding regression as bias-free solution

Instead of fitting the cubic polynomial to the entire dataset, we only fit it to historical data up to the current day. With an expanding window, the regression is fit using all data available before the current bar, while a rolling window limits the fit to the most recent n bars (n ≥ 4, the minimum needed to determine a cubic). In both cases, the slope and entry or exit signals are computed strictly from past regression values, ensuring the strategy relies only on information that would have been available at the time.
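The leak is easy to see numerically. In this sketch on a synthetic series (an assumption, not PLTR data), the full-sample cubic's value at an early bar changes when later prices are appended, which is exactly the lookahead described above, while a fit that uses only the bars before that point does not change. The two helper functions are hypothetical and use NumPy's polyfit in place of scikit-learn.

```python
import numpy as np

rng = np.random.default_rng(42)
prices = 50 + np.cumsum(rng.normal(0, 1, 300))   # synthetic price path
bar = 100                                         # the "current" bar we evaluate

def full_sample_fit_at(y, i, degree=3):
    """Cubic fitted to ALL of y, evaluated at bar i (leaks future data)."""
    t = np.arange(len(y), dtype=float)
    return np.polyval(np.polyfit(t, y, degree), i)

def past_only_fit_at(y, i, degree=3):
    """Cubic fitted to bars 0..i-1 only, evaluated at bar i (no lookahead)."""
    t = np.arange(i, dtype=float)
    return np.polyval(np.polyfit(t, y[:i], degree), i)

# Same bar, two different amounts of "future" data available
short, long_ = prices[:200], prices
print(full_sample_fit_at(short, bar), full_sample_fit_at(long_, bar))  # differ
print(past_only_fit_at(short, bar), past_only_fit_at(long_, bar))      # identical
```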

Implementing the expanding cubic regression without lookahead bias

import pandas as pd

import numpy as np

from sklearn.preprocessing import PolynomialFeatures

from sklearn.linear_model import LinearRegression

# Assume df has a 'close' column

#setting up a cubic regression to model the trend

window = None # Expanding window; use an integer for rolling window

degree = 3 # Cubic regression

# Store regression predictions

df["poly_fit"] = np.nan

#poly_fit will store the trend value the model predicts at each bar.

#Rolling through time (no lookahead)

#Choosing historical data only

#If you used a rolling window, you’d only look back N bars.

#Since this is expanding, you use everything from the start up to now.

#No future prices are included

for i in range(degree + 1, len(df)):

    # Walk forward one bar at a time, just like real trading.
    # Start at index degree + 1 = 4: a cubic has 4 coefficients, so it needs at least 4 past points.

    if window:

        start = max(0, i - window)  # rolling window: only the last `window` bars

    else:

        start = 0  # expanding window: everything from the first bar

    X = np.arange(start, i).reshape(-1, 1)

    y = df["close"].iloc[start:i].values

    # X is just a time index.
    # y is the historical closing prices for bars start..i-1 (strictly before bar i).
    # This is the data our model would realistically have seen at that moment.

    # Fit cubic regression

    poly = PolynomialFeatures(degree=degree)

    X_poly = poly.fit_transform(X)

    model = LinearRegression()

    model.fit(X_poly, y)

    # We fit a cubic curve to past prices.
    # This smooths out noise and captures the bigger trend shape.

    # Predict for the current bar only; [0] turns the 1-element prediction into a scalar.
    # df has a default RangeIndex, so the label i is also the i-th row.

    X_current = poly.transform(np.array([[i]]))

    df.at[i, "poly_fit"] = model.predict(X_current)[0]

    # We ask the model: based on what you know so far, where should price be right now?
    # That predicted value becomes our trend line at time i.

Calculating the slope of our polynomial regression line

# First derivative (slope = trend direction)

df["reg_slope"] = df["poly_fit"].diff()

#If the regression value is rising, the slope is positive.

#This acts as a trend filter so you don't trade against momentum.

Defining the entry and exit conditions

# Entry & exit rules

df["reg_long_signal"] = (

(df["close"] > df["poly_fit"]) &

(df["reg_slope"] > 0)

)

'''

You go long when Price is above the trend line, and

the trend line is sloping upward.

Price is strong and the trend agrees.

'''

# Convert the True/False signals into 1s and 0s

df["position"] = df["reg_long_signal"].astype(int)

# Flag the exact bar where we enter (0 -> 1) or exit (1 -> 0) the trade

df["entry"] = (df["position"] == 1) & (df["position"].shift(1) == 0)  # .shift(1) compares with the previous bar only

df["exit"] = (df["position"] == 0) & (df["position"].shift(1) == 1)

# No lookahead introduced: each flag uses only the current and previous bar.

Visualizing the trading signals overlaid on the closing price

import matplotlib.pyplot as plt

plt.figure(figsize=(13, 6))

# Price

plt.plot(df["date"], df["close"],

label="Price",

color="black",

linewidth=1.5,alpha=0.6)

# Poly filter

plt.plot(df["date"], df["poly_fit"],

label="Poly Fit Trend Filter",

color="blue",

linewidth=2)

# Entry signals

plt.scatter(df.loc[df["entry"], "date"],

df.loc[df["entry"], "close"],

marker="^",

color="green",

s=90,

label="Long Entry")

# Exit signals

plt.scatter(df.loc[df["exit"], "date"],

df.loc[df["exit"], "close"],

marker="v",

color="red",

s=90,

label="Exit")

# Highlight time in position

plt.fill_between(

df["date"],

0,  # y-limits in axes units (0 = bottom, 1 = top) because of the x-axis transform below

1,

where=df["position"] == 1,

color="green",

alpha=0.08,

transform=plt.gca().get_xaxis_transform(),

#label="In Position"

)

plt.title("PLTR – Bias-Free Poly Fit Deg=3 Trading Signals")

plt.xlabel("Date")

plt.ylabel("Price ($)")

plt.legend(loc="upper left")

plt.grid(alpha=0.3)

plt.tight_layout()

plt.show()

PLTR — Bias-Free Poly Fit Deg=3 Trading Signals

Takeaway

To keep things realistic and avoid lookahead bias, the cubic regression is run in a walk-forward way, using only the price data that would have been known at the time. On each bar, the model gives us a smoothed view of the trend and tells us whether it’s pointing up or down. We go long when price is above the regression line and the trend is rising, which signals strength in the direction of the bigger move. Entries and exits happen automatically as these conditions change, letting the strategy ride the long-term trend without peeking into the future.
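The same walk-forward logic can be written more compactly with NumPy's polyfit. The sketch below is an alternative to the scikit-learn loop, not the author's code, and the helper name walk_forward_poly is hypothetical. It fits on bars [start, i) only, predicts bar i, supports both an expanding window (window=None) and a rolling one, and leaves bars with fewer than degree + 1 past points as NaN.

```python
import numpy as np
import pandas as pd

def walk_forward_poly(close, degree=3, window=None):
    """Walk-forward polynomial trend: each bar is predicted from earlier bars only.

    window=None -> expanding window (all bars before i)
    window=k    -> rolling window (the k bars before i)
    Bars with fewer than degree + 1 past points are left as NaN.
    """
    y = np.asarray(close, dtype=float)
    fit = np.full(len(y), np.nan)
    for i in range(degree + 1, len(y)):
        start = 0 if window is None else max(0, i - window)
        if i - start < degree + 1:
            continue                                  # too few points to pin down the polynomial
        t = np.arange(i - start, dtype=float)         # local time index 0..(i-start-1)
        coefs = np.polyfit(t, y[start:i], degree)     # fit on bars start..i-1 only
        fit[i] = np.polyval(coefs, i - start)         # extrapolate one step to bar i
    return pd.Series(fit, index=getattr(close, "index", None))

# Usage on a synthetic series (assumption, not PLTR data)
rng = np.random.default_rng(1)
close = pd.Series(100 + np.cumsum(rng.normal(0, 1, 250)))

trend = walk_forward_poly(close, degree=3)                    # expanding window
trend_rolling = walk_forward_poly(close, degree=3, window=60) # rolling window
long_signal = (close > trend) & (trend.diff() > 0)            # same rule as above
```

Shifting the local time index to start at zero keeps the powers of t small, which helps numerical conditioning when the expanding window grows to thousands of bars.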

Bias-Free Backtesting vs Market

In this part, we backtest the strategy by looking at daily and cumulative returns, factoring in a small transaction cost of 0.1%. We also compare it to a simple Buy & Hold approach. To get a fuller picture of performance, we check metrics like CAGR, annualized volatility, Sharpe ratio (assuming zero risk-free rate), and maximum drawdown.

# Transaction cost per trade

transaction_cost = 0.001 # 0.1% per trade

# Compute daily returns

df["daily_return"] = df["close"].pct_change().fillna(0)

# Build position from available entry/exit signals as an example

df["position_from_signals"] = 0

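for i in range(1, len(df)):

    if df.at[i, "entry"]:

        df.at[i, "position_from_signals"] = 1

    elif df.at[i, "exit"]:

        df.at[i, "position_from_signals"] = 0

    else:

        # No new signal: carry the previous bar's position forward
        df.at[i, "position_from_signals"] = df.at[i - 1, "position_from_signals"]

# Strategy daily returns
# Note: the position is set from the same bar's close and also earns that bar's return;
# df["position_from_signals"].shift(1) would be the stricter next-bar convention.

df["strategy_return"] = df["daily_return"] * df["position_from_signals"]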

# Detect trades for transaction costs

df["trade"] = df["entry"].astype(int) + df["exit"].astype(int)

# Apply transaction costs

df["strategy_return_tc"] = df["strategy_return"] - df["trade"] * transaction_cost

# Cumulative returns

df["cum_strategy"] = (1 + df["strategy_return"]).cumprod()

df["cum_strategy_tc"] = (1 + df["strategy_return_tc"]).cumprod()

df["cum_buy_hold"] = (1 + df["daily_return"]).cumprod()

Visualizing strategy cumulative returns vs Buy & Hold

import matplotlib.pyplot as plt

plt.figure(figsize=(13, 6))

plt.plot(df["date"], df["cum_buy_hold"],

label="Buy & Hold",

color="orange",

linewidth=2)

plt.plot(df["date"], df["cum_strategy"],

label="Poly Fit Long-Only Strategy",

color="green",

linewidth=2)

plt.plot(df["date"], df["cum_strategy_tc"],

label="Poly Fit Long-Only + Transaction Costs",

color="blue",

linewidth=2)

plt.title("PLTR Backtest: Poly Fit Strategy vs Buy & Hold")

plt.xlabel("Date")

plt.ylabel("Cumulative Return (growth of $1)")

plt.legend()

plt.grid(alpha=0.3)

plt.tight_layout()

plt.show()

PLTR Backtest: Poly Fit Strategy vs Buy & Hold

Comparing total returns of Poly Fit Strategy (with costs) vs. Buy & Hold

total_return_strategy_tc = df["cum_strategy_tc"].iloc[-1] - 1

total_return_buy_hold = df["cum_buy_hold"].iloc[-1] - 1

print(f"Total Return - Poly Fit Strategy (with cost): {total_return_strategy_tc:.2%}")

print(f"Total Return - Buy & Hold: {total_return_buy_hold:.2%}")

Total Return - Poly Fit Strategy (with cost): 2033.68%

Total Return - Buy & Hold: 527.26%

Putting all the risk-return metrics together for comparison

# --- Total Return ---

total_return_strategy = df["cum_strategy_tc"].iloc[-1] - 1

total_return_bh = df["cum_buy_hold"].iloc[-1] - 1

# --- CAGR / Annualized Return ---

days = len(df)

annual_factor = 252 / days

cagr_strategy = (1 + total_return_strategy) ** annual_factor - 1

cagr_bh = (1 + total_return_bh) ** annual_factor - 1

# --- Annualized Volatility ---

vol_strategy = df["strategy_return_tc"].std() * np.sqrt(252)

vol_bh = df["daily_return"].std() * np.sqrt(252)

# --- Sharpe Ratio, computed here as CAGR / annualized volatility (rf=0) ---

sharpe_strategy = cagr_strategy / vol_strategy

sharpe_bh = cagr_bh / vol_bh

# --- Max Drawdown ---

def max_drawdown(cum_returns):

    roll_max = cum_returns.cummax()

    drawdown = (cum_returns - roll_max) / roll_max

    return drawdown.min()

mdd_strategy = max_drawdown(df["cum_strategy_tc"])

mdd_bh = max_drawdown(df["cum_buy_hold"])

# --- Print results ---

print("=== Strategy Performance Metrics ===")

print(f"Total Return: {total_return_strategy:.2%}")

print(f"CAGR: {cagr_strategy:.2%}")

print(f"Annualized Volatility: {vol_strategy:.2%}")

print(f"Sharpe Ratio: {sharpe_strategy:.2f}")

print(f"Max Drawdown: {mdd_strategy:.2%}")

print("\n=== Buy & Hold Metrics ===")

print(f"Total Return: {total_return_bh:.2%}")

print(f"CAGR: {cagr_bh:.2%}")

print(f"Annualized Volatility: {vol_bh:.2%}")

print(f"Sharpe Ratio: {sharpe_bh:.2f}")

print(f"Max Drawdown: {mdd_bh:.2%}")

=== Strategy Performance Metrics ===

Total Return: 2033.68%

CAGR: 83.10%

Annualized Volatility: 41.26%

Sharpe Ratio: 2.01

Max Drawdown: -50.67%

=== Buy & Hold Metrics ===

Total Return: 527.26%

CAGR: 43.75%

Annualized Volatility: 67.60%

Sharpe Ratio: 0.65

Max Drawdown: -84.62%

Estimating the total number of trades, win rate, and average trade return

# Identify trades

trades = df[df["position"].diff().abs() == 1].index

trade_returns = []

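for i in range(0, len(trades), 2):

    entry = trades[i]

    # Pair each entry with the next position change (its exit); an open final trade is marked at the last bar
    exit_ = trades[i+1] if i+1 < len(trades) else len(df)-1

    trade_ret = (df["close"].iloc[exit_] / df["close"].iloc[entry]) - 1

    trade_returns.append(trade_ret)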

trade_returns = np.array(trade_returns)

win_rate = np.mean(trade_returns > 0)

avg_trade_ret = np.mean(trade_returns)

print(f"Number of Trades: {len(trade_returns)}")

print(f"Win Rate: {win_rate:.2%}")

print(f"Average Trade Return: {avg_trade_ret:.2%}")

Number of Trades: 48

Win Rate: 25.00%

Average Trade Return: 1.89%

The Poly Fit strategy clearly outperforms Buy & Hold across the board. Total returns are much higher at 2034% vs 527%, and the CAGR is almost double (83% vs 44%), showing the strategy compounds faster over time.

At the same time, it manages risk better. Volatility is lower (41% vs 68%), the Sharpe ratio is way stronger (2.01 vs 0.65), and maximum drawdown is far smaller (-51% vs -85%).
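The code above reports CAGR divided by annualized volatility as the Sharpe ratio. The more common annualized Sharpe ratio with rf = 0 is the mean daily return over its standard deviation, times √252; the two can diverge for volatile series because CAGR is a compounded (geometric) return. The sketch below, with a hypothetical helper risk_metrics and made-up returns rather than PLTR data, computes both next to the same peak-to-trough drawdown definition used above.

```python
import numpy as np
import pandas as pd

def risk_metrics(daily_returns, periods_per_year=252):
    """Annualized risk/return metrics from simple daily returns, assuming rf = 0."""
    r = pd.Series(daily_returns, dtype=float)
    equity = (1 + r).cumprod()                       # growth of $1
    years = len(r) / periods_per_year
    cagr = equity.iloc[-1] ** (1 / years) - 1
    vol = r.std() * np.sqrt(periods_per_year)
    return {
        "cagr": cagr,
        "ann_vol": vol,
        "cagr_over_vol": cagr / vol,                                  # the ratio the code above prints
        "sharpe": r.mean() / r.std() * np.sqrt(periods_per_year),     # conventional annualized Sharpe
        "max_drawdown": (equity / equity.cummax() - 1).min(),         # same as (cum - peak) / peak
    }

# Usage on a hypothetical, volatile daily return series
rng = np.random.default_rng(3)
m = risk_metrics(rng.normal(0.001, 0.04, 1260))
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```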

The strategy keeps it moderate with 48 trades, taking enough action to capture moves without overtrading.

Only one in four trades is a winner (25% win rate), which might sound low but is common for trend-following and regression-based strategies. The big winners outweigh the many small losses, which keeps the average trade positive.

On average, each trade returns about 1.9% before transaction costs (measured close-to-close from the entry bar to the exit bar), a small per-trade edge repeated across 48 trades.
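One consistency check on these numbers: for any set of long-only trade returns, compounding them can never beat compounding at their arithmetic mean (the AM-GM inequality). So 48 trades averaging 1.89% before costs can multiply capital by at most (1.0189)^48 ≈ 2.46, roughly +146%, while the daily backtest reports +2034% even after costs. A likely explanation is that the daily backtest multiplies each bar's return by a position decided at that same bar's close, so it books the signal bar's move, whereas the trade list measures returns from the entry bar's close to the exit bar's close. The arithmetic, as a sketch using the printed figures:

```python
import numpy as np

n_trades = 48
avg_trade = 0.0189          # average trade return printed above (before costs)
reported_total = 20.3368    # +2033.68% total return, as a fraction

# AM-GM: prod(1 + r_i) <= (1 + mean(r))**n whenever every 1 + r_i >= 0
max_growth = (1 + avg_trade) ** n_trades
print(f"Upper bound on compounded trade growth: {max_growth:.2f}x ({max_growth - 1:.0%})")  # 2.46x (146%)
print(f"Reported strategy growth: {1 + reported_total:.2f}x")

# Illustration on random trade returns (hypothetical): the bound always holds
rng = np.random.default_rng(7)
r = np.clip(rng.normal(avg_trade, 0.15, n_trades), -0.9, None)
assert np.prod(1 + r) <= (1 + r.mean()) ** n_trades
```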

Takeaways

The strategy not only makes more money but does so with smaller swings and shallower drawdowns than simple Buy & Hold.

Even with a low win rate, the strategy works because it lets winners run and cuts losses quickly, capturing the bigger moves in the trend rather than trying to be right all the time.
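The daily backtest above multiplies each bar's return by a position that was decided at that same bar's close. A stricter convention, sketched below under the assumption of close-to-close fills, acts on a signal only from the next bar, i.e. position.shift(1). The helper strategy_returns is hypothetical, and the five-bar price path is made up to show how a same-bar position books the very move that created the signal.

```python
import pandas as pd

def strategy_returns(close, position, cost=0.001, lag=1):
    """Daily strategy returns with a position lag and a cost on every position change.

    lag=1: a position decided at the close of bar i earns bar i+1's return.
    lag=0: reproduces a same-bar backtest.
    """
    daily = close.pct_change().fillna(0)
    held = position.shift(lag).fillna(0) if lag else position
    trades = held.diff().abs().fillna(held.abs())   # 1 on every entry or exit
    return daily * held - trades * cost

# Hypothetical prices: the signal fires on the +10% move at bar 2
close = pd.Series([100.0, 100.0, 110.0, 110.0, 100.0])
position = pd.Series([0, 0, 1, 1, 0])

same_bar = (1 + strategy_returns(close, position, cost=0.0, lag=0)).prod() - 1
next_bar = (1 + strategy_returns(close, position, cost=0.0, lag=1)).prod() - 1
print(f"same-bar: {same_bar:.2%}, next-bar: {next_bar:.2%}")  # same-bar: 10.00%, next-bar: -9.09%
```

With the lag, the strategy enters at 110 and is still holding when price falls back to 100, which is what a trader acting on the close-of-bar signal would actually have experienced.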

Out-of-Sample (OOS) Testing vs Market

While backtesting offers insights into historical performance, out-of-sample (OOS) testing helps mitigate the risk of overfitting by providing a more realistic assessment of a strategy’s adaptability and greater confidence in its future performance.

In this part, we split the price data into in-sample (IS) and out-of-sample (OOS) chunks based on time. We then backtest the strategy on the OOS segment and compare its performance to Buy & Hold using the same approach as before.

Using a time-based train/test split to partition the price data into IS and OOS periods

split_ratio = 0.9

split_index = int(len(df) * split_ratio)

# In-sample

df_is = df.iloc[:split_index].copy()

# Out-of-sample

df_oos = df.iloc[split_index:].copy()

Fitting the cubic regression model and computing the regression slope

import numpy as np

from sklearn.preprocessing import PolynomialFeatures

from sklearn.linear_model import LinearRegression

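# Time index
# Note: unlike the expanding-window fit earlier, this cubic is fitted to the whole OOS window at once,
# so each bar's trend value (and slope) also depends on later OOS prices.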

df_oos["t"] = np.arange(len(df_oos))

X = df_oos[["t"]]

y = df_oos["close"]

poly = PolynomialFeatures(degree=3)

X_poly = poly.fit_transform(X)

model = LinearRegression()

model.fit(X_poly, y)

df_oos["poly_fit"] = model.predict(X_poly)

df_oos["reg_slope"] = df_oos["poly_fit"].diff()

Defining the position

df_oos["reg_long_signal"] = (

(df_oos["close"] > df_oos["poly_fit"]) &

(df_oos["reg_slope"] > 0)

)

df_oos["position"] = df_oos["reg_long_signal"].astype(int)

Calculating the strategy returns with transaction costs

transaction_cost = 0.001

df_oos["daily_return"] = df_oos["close"].pct_change().fillna(0)

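# Same-bar convention as the main backtest; df_oos["position"].shift(1) would be the stricter next-bar version

df_oos["strategy_return"] = df_oos["daily_return"] * df_oos["position"]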

# Detect trades

df_oos["trade"] = df_oos["position"].diff().abs()

df_oos["strategy_return"] -= df_oos["trade"] * transaction_cost

# Cumulative returns

df_oos["cum_strategy"] = (1 + df_oos["strategy_return"]).cumprod()

df_oos["cum_buy_hold"] = (1 + df_oos["daily_return"]).cumprod()

Plotting cumulative returns of the strategy vs Buy & Hold

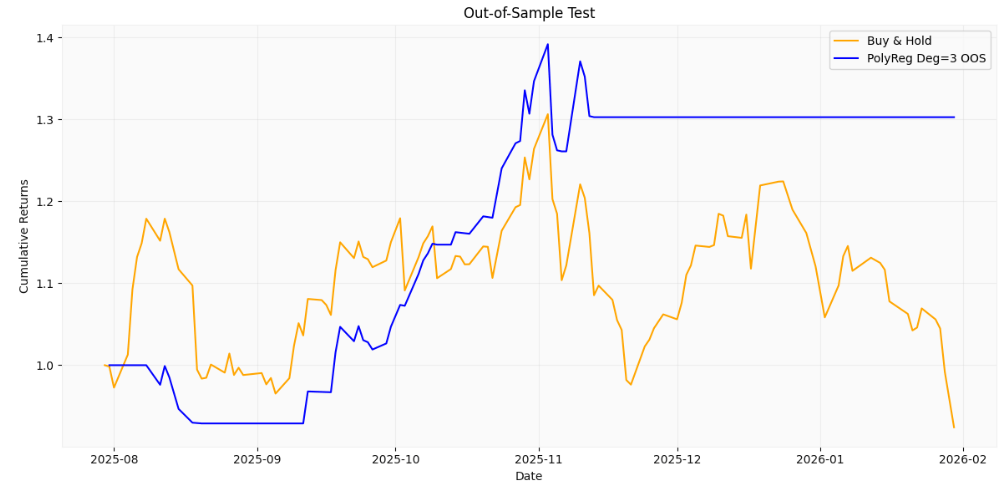

import matplotlib.pyplot as plt

plt.figure(figsize=(12, 6))

plt.plot(df_oos["date"], df_oos["cum_buy_hold"], label="Buy & Hold", color="orange")

plt.plot(df_oos["date"], df_oos["cum_strategy"], label="PolyReg Deg=3 OOS", color="blue")

plt.title("Out-of-Sample Test")

plt.xlabel("Date")

plt.ylabel("Cumulative Returns")

plt.legend()

plt.grid(alpha=0.3)

plt.tight_layout()

plt.show()

OOS backtesting: Cumulative returns of the strategy (with transaction costs) versus Buy & Hold

Comparison of additional performance metrics, following the approach used in backtesting

# --- Total Return ---

total_return_strategy = df_oos["cum_strategy"].iloc[-1] - 1

total_return_bh = df_oos["cum_buy_hold"].iloc[-1] - 1

# --- CAGR / Annualized Return ---

days = len(df)  # full-history length (1,275 bars); len(df_oos) would annualize over the OOS window itself

annual_factor = 252 / days

cagr_strategy = (1 + total_return_strategy) ** annual_factor - 1

cagr_bh = (1 + total_return_bh) ** annual_factor - 1

# --- Annualized Volatility ---

vol_strategy = df_oos["strategy_return"].std() * np.sqrt(252)

vol_bh = df_oos["daily_return"].std() * np.sqrt(252)

# --- Sharpe Ratio (assume rf=0) ---

sharpe_strategy = cagr_strategy / vol_strategy

sharpe_bh = cagr_bh / vol_bh

# --- Max Drawdown ---

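def max_drawdown(cum_returns):
    # Running peak of the cumulative-return curve
    roll_max = cum_returns.cummax()
    # Fractional decline from the running peak
    drawdown = (cum_returns - roll_max) / roll_max
    # The most negative value is the maximum drawdown
    return drawdown.min()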

mdd_strategy = max_drawdown(df_oos["cum_strategy"])

mdd_bh = max_drawdown(df_oos["cum_buy_hold"])

# --- Print results ---

print("=== Strategy Performance Metrics ===")

print(f"Total Return: {total_return_strategy:.2%}")

print(f"CAGR: {cagr_strategy:.2%}")

print(f"Annualized Volatility: {vol_strategy:.2%}")

print(f"Sharpe Ratio: {sharpe_strategy:.2f}")

print(f"Max Drawdown: {mdd_strategy:.2%}")

print("\n=== Buy & Hold Metrics ===")

print(f"Total Return: {total_return_bh:.2%}")

print(f"CAGR: {cagr_bh:.2%}")

print(f"Annualized Volatility: {vol_bh:.2%}")

print(f"Sharpe Ratio: {sharpe_bh:.2f}")

print(f"Max Drawdown: {mdd_bh:.2%}")

=== Strategy Performance Metrics ===

Total Return: 30.25%

CAGR: 5.36%

Annualized Volatility: 25.80%

Sharpe Ratio: 0.21

Max Drawdown: -9.40%

=== Buy & Hold Metrics ===

Total Return: -7.58%

CAGR: -1.55%

Annualized Volatility: 47.36%

Sharpe Ratio: -0.03

Max Drawdown: -29.25%

The strategy clearly outperforms Buy & Hold in this out-of-sample period. It delivered a positive total return of 30% with a CAGR of 5.4%, while Buy & Hold actually lost around 7.6% over the same timeframe.

In terms of risk, the strategy is much smoother: annualized volatility is only 25.8% versus 47.4% for Buy & Hold, and the maximum drawdown is significantly smaller at -9.4% compared to -29.3%. The Sharpe ratio is positive (0.21) for the strategy, while Buy & Hold's is slightly negative (-0.03), showing that the strategy generated positive risk-adjusted returns despite a modest CAGR.
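The four metrics printed above can also be computed from a plain array of daily returns. This is a minimal sketch, not the author's code, assuming 252 trading days per year and a zero risk-free rate; it keeps the article's convention of Sharpe = CAGR / annualized volatility and annualizes over the number of returns actually passed in. All function names are hypothetical.

```python
import numpy as np

TRADING_DAYS = 252  # assumed trading days per year

def cagr(daily_returns):
    """Compound annual growth rate over the window of returns supplied."""
    r = np.asarray(daily_returns, dtype=float)
    total = np.prod(1 + r)
    return total ** (TRADING_DAYS / len(r)) - 1

def annual_vol(daily_returns):
    """Annualized volatility (sample std with ddof=1, as pandas .std() uses)."""
    return np.std(daily_returns, ddof=1) * np.sqrt(TRADING_DAYS)

def sharpe_cagr(daily_returns):
    """Sharpe-style ratio with rf = 0, computed as CAGR / annualized volatility."""
    return cagr(daily_returns) / annual_vol(daily_returns)

def max_drawdown_from_returns(daily_returns):
    """Most negative peak-to-trough decline, starting from initial capital 1.0."""
    growth = np.cumprod(1 + np.asarray(daily_returns, dtype=float))
    equity = np.concatenate(([1.0], growth))
    peaks = np.maximum.accumulate(equity)
    return np.min(equity / peaks - 1)

rets = [0.10, -0.20, 0.05, 0.10]
print(f"CAGR: {cagr(rets):.2%}, MDD: {max_drawdown_from_returns(rets):.2%}")
```

Passing only the OOS returns makes the CAGR exponent 252 / (number of OOS days), so the annualization matches the period being evaluated.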

Bottom Line: Even though the gains aren’t huge in absolute terms, the strategy provides a safer ride, protecting capital and keeping drawdowns low, which is especially valuable in choppy or down markets.

Conclusions

In this paper, we explored how to build profitable long-only, trend-following trading strategies using polynomial regression in Python.

The input data consisted of daily PLTR stock prices from January 4, 2021, to January 30, 2026, sourced via the EODHD APIs.

We began by analyzing five polynomial regression scenarios, testing polynomial orders from 1 up to 4. The results suggest that the cubic model hits the sweet spot across the R², MAE, RMSE, and MAPE metrics.
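In-sample fit quality by polynomial degree can be compared with a few lines of NumPy. The sketch below fits orders 1 to 4 to a synthetic, hypothetical price path against a time index and reports R², MAE, RMSE and MAPE. It uses `numpy.polyfit` rather than the scikit-learn `PolynomialFeatures` + `LinearRegression` pipeline used in the OOS code, and it is not the author's original comparison.

```python
import numpy as np

def fit_metrics(t, y, degree):
    """In-sample fit quality of a polynomial trend of the given degree."""
    # Rescale time to [0, 1] to keep the polynomial fit well conditioned
    x = (t - t.min()) / (t.max() - t.min())
    coefs = np.polyfit(x, y, degree)
    y_hat = np.polyval(coefs, x)
    resid = y - y_hat
    ss_res = np.sum(resid ** 2)
    ss_tot = np.sum((y - y.mean()) ** 2)
    return {
        "R2": 1 - ss_res / ss_tot,
        "MAE": np.mean(np.abs(resid)),
        "RMSE": np.sqrt(np.mean(resid ** 2)),
        "MAPE": np.mean(np.abs(resid / y)) * 100,  # percent; assumes y != 0
    }

# Synthetic price path (hypothetical): cubic trend plus Gaussian noise
rng = np.random.default_rng(0)
t = np.arange(500, dtype=float)
y = 50 + 0.3 * t - 1.2e-3 * t**2 + 1.5e-6 * t**3 + rng.normal(0, 2, t.size)

results = {d: fit_metrics(t, y, d) for d in range(1, 5)}
for d, m in results.items():
    print(d, {k: round(v, 3) for k, v in m.items()})
```

Because each higher-order fit nests the lower-order ones, in-sample R² can only stay the same or rise with the degree; choosing the "sweet spot" therefore means weighing small in-sample gains against the risk of overfitting.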

Next, we implemented a long-only trading strategy based on the cubic regression model, carefully designed to avoid lookahead bias.
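A cubic trend signal can be kept free of lookahead bias by refitting the polynomial each day on a trailing window of past closes only, and reading the fitted value at the newest bar. The sketch below applies the same long rule as the OOS code (close above the fit and a rising fit) to such a causal fit. The 60-day window, the function name and the random-walk prices are assumptions for illustration, not necessarily the author's design.

```python
import numpy as np

def rolling_cubic_signal(close, window=60, degree=3):
    """Causal long/flat signal from a trailing-window polynomial fit (hypothetical)."""
    close = np.asarray(close, dtype=float)
    n = close.size
    fit = np.full(n, np.nan)
    # Rescaled time within each window: 0 at the oldest bar, 1 at the newest
    x = np.arange(window, dtype=float) / (window - 1)
    for t in range(window - 1, n):
        # Fit only the trailing window ending at day t (no future prices)
        coefs = np.polyfit(x, close[t - window + 1 : t + 1], degree)
        # Fitted trend value at the newest bar of the window
        fit[t] = np.polyval(coefs, 1.0)
    # Day-over-day change of the causal fit, mirroring poly_fit.diff()
    slope = np.diff(fit, prepend=np.nan)
    # Long when close is above the fit and the fit is rising; flat otherwise
    valid = ~np.isnan(fit) & ~np.isnan(slope)
    signal = np.zeros(n, dtype=int)
    signal[valid] = (close[valid] > fit[valid]) & (slope[valid] > 0)
    return fit, slope, signal

# Hypothetical random-walk prices for illustration
rng = np.random.default_rng(1)
prices = 100 + np.cumsum(rng.normal(0, 1, 300))
fit, slope, signal = rolling_cubic_signal(prices)
print(signal[-10:])
```

Because each fitted value depends only on the window ending that day, changing later prices cannot change earlier signals. A signal computed at a day's close can only be traded from the next bar onward.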

Finally, we backtested the strategy by tracking daily and cumulative returns while charging a modest 0.1% transaction cost on every position change. We compared the results to a straightforward Buy & Hold approach and assessed overall performance using CAGR, annualized volatility, Sharpe ratio (with a zero risk-free rate), and maximum drawdown.

To get a more realistic sense of how the strategy might perform in practice, we split the price data into time-based in-sample (IS) and out-of-sample (OOS) segments. We then backtested the strategy on the OOS segment and compared its performance to Buy & Hold using the same approach as before.
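A time-based split simply cuts the date-sorted rows at one point, with no shuffling, so every out-of-sample day comes after every in-sample day. A minimal sketch, assuming an 80/20 split; the ratio and `time_split` are hypothetical.

```python
import numpy as np

def time_split(n_rows, oos_fraction=0.2):
    """Chronological split: first rows in-sample, last rows out-of-sample."""
    cut = int(round(n_rows * (1 - oos_fraction)))
    # No shuffling: every OOS index comes after every IS index
    return np.arange(cut), np.arange(cut, n_rows)

is_idx, oos_idx = time_split(1000, oos_fraction=0.2)
print(is_idx[-1], oos_idx[0], len(oos_idx))  # 799 800 200
```

With a date-sorted DataFrame, `df.iloc[is_idx]` and `df.iloc[oos_idx]` give the two segments.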

Backtesting results showed that the strategy not only earned more than Buy & Hold but also did so with smaller swings and less severe drawdowns. Even with a low win rate, it worked because it let winners run and cut losses quickly, capturing the bigger trend moves rather than trying to be right on every trade.
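Whether a low win rate can still pay off comes down to how large the average winner is relative to the average loser, which can be read directly from the position series. The sketch below treats each run of consecutive long days as one trade, compounds the daily returns within it, and reports the win rate and average win/loss. It is a minimal sketch with a hypothetical helper and toy data; the returns passed in are used as-is, so pass cost-adjusted returns if costs should count.

```python
import numpy as np

def trade_stats(position, daily_returns):
    """Win rate and average win/loss per trade (hypothetical helper).

    A trade is a run of consecutive days with position == 1.
    """
    pos = np.asarray(position, dtype=int)
    r = np.asarray(daily_returns, dtype=float)
    trade_returns = []
    growth, in_trade = 1.0, False
    for p, ret in zip(pos, r):
        if p == 1:
            # Compound the daily returns earned while the trade is open
            growth *= 1 + ret
            in_trade = True
        elif in_trade:
            # Position went flat: record the finished trade and reset
            trade_returns.append(growth - 1)
            growth, in_trade = 1.0, False
    if in_trade:
        # Close a trade still open at the end of the data
        trade_returns.append(growth - 1)
    trades = np.array(trade_returns)
    wins = trades[trades > 0]
    losses = trades[trades <= 0]  # flat trades are counted as losses
    return {
        "n_trades": int(trades.size),
        "win_rate": wins.size / trades.size if trades.size else float("nan"),
        "avg_win": float(wins.mean()) if wins.size else 0.0,
        "avg_loss": float(losses.mean()) if losses.size else 0.0,
    }

# Toy example: two small losing trades and one large winner
pos = [1, 1, 0, 1, 0, 1, 1, 1]
ret = [-0.01, 0.00, 0.02, -0.02, 0.01, 0.05, 0.04, 0.06]
print(trade_stats(pos, ret))
```

In the toy example only one trade in three wins, yet the single winner (about +15.8%) outweighs the two small losses (about -1% and -2%).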

The OOS test showed that, even though the gains aren't huge in absolute terms, the strategy offered a safer ride than Buy & Hold, with lower volatility (25.8% vs 47.4%) and a much smaller maximum drawdown (-9.4% vs -29.3%), which is especially valuable in choppy or down markets.

To wrap up, the numerical results provide evidence that carefully designed regression-based strategies can deliver solid returns while mitigating downside risk.

This study provides insights for future trend-following frameworks, emphasizing the importance of comprehensive bias-free backtesting, OOS testing, and Walk-Forward Optimization (WFO).
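Walk-Forward Optimization repeats the IS/OOS idea over successive windows: parameters (for example the polynomial degree or a fitting window) are chosen on each training range and then evaluated on the range that immediately follows it. A minimal sketch of the split generator, assuming fixed-length rolling training windows; `walk_forward_splits` and the sizes are hypothetical, and an expanding (anchored) training window is a common alternative.

```python
def walk_forward_splits(n_rows, train_size, test_size, step=None):
    """Yield (train, test) index ranges for rolling walk-forward testing."""
    # By default advance by one test window, so test ranges do not overlap
    step = test_size if step is None else step
    start = 0
    while start + train_size + test_size <= n_rows:
        train = range(start, start + train_size)
        # The test range begins immediately after its training range
        test = range(start + train_size, start + train_size + test_size)
        yield train, test
        start += step

for train, test in walk_forward_splits(10, train_size=4, test_size=2):
    print(list(train), list(test))
# [0, 1, 2, 3] [4, 5]
# [2, 3, 4, 5] [6, 7]
# [4, 5, 6, 7] [8, 9]
```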
